Documentation

- 1: Overview

- 2: Getting Started

- 2.1: First time users

- 2.2: Apache Installation

- 2.3: Docker Installation

- 2.4: Nginx Installation

- 2.5: Customize Installation

- 2.6: Upgrade OpenDataBio

- 3: API services

- 3.1: Quick reference

- 3.2: GET data

- 3.3: Post data

- 3.4: Put data

- 4: Concepts

- 4.1: Core Objects

- 4.2: Trait Objects

- 4.3: Data Access Objects

- 4.4: Auxiliary Objects

- 5: Contribution Guidelines

- 6: Tutorials

- 6.1: Getting data with OpenDataBio-R

- 6.2: Import data with R

- 6.2.1: Import Locations

- 6.2.2: Import BibReferences

- 6.2.3: Import Vernacular

- 6.2.4: Import Media

- 6.2.5: Import Taxons

- 6.2.6: Import Persons

- 6.2.7: Import Traits

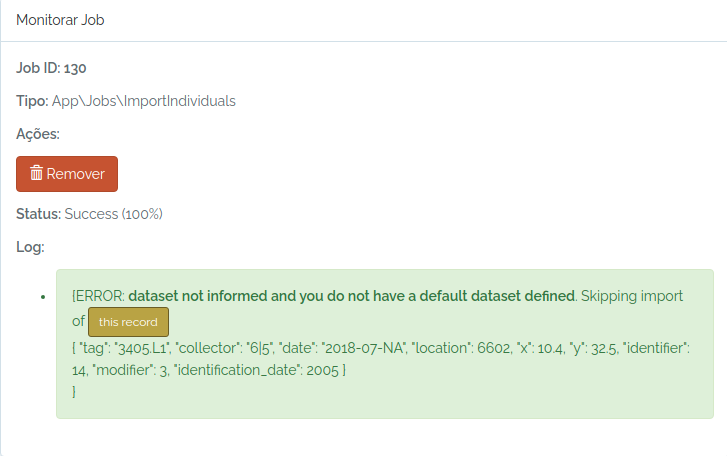

- 6.2.8: Import Individuals & Vouchers

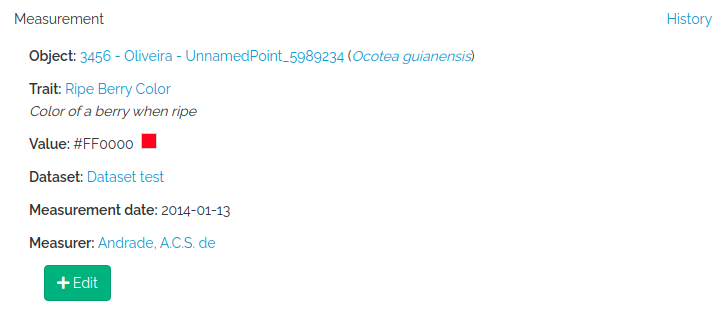

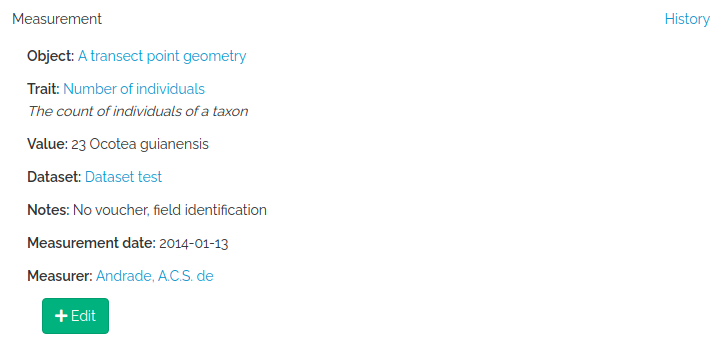

- 6.2.9: Import Measurements

1 - Overview

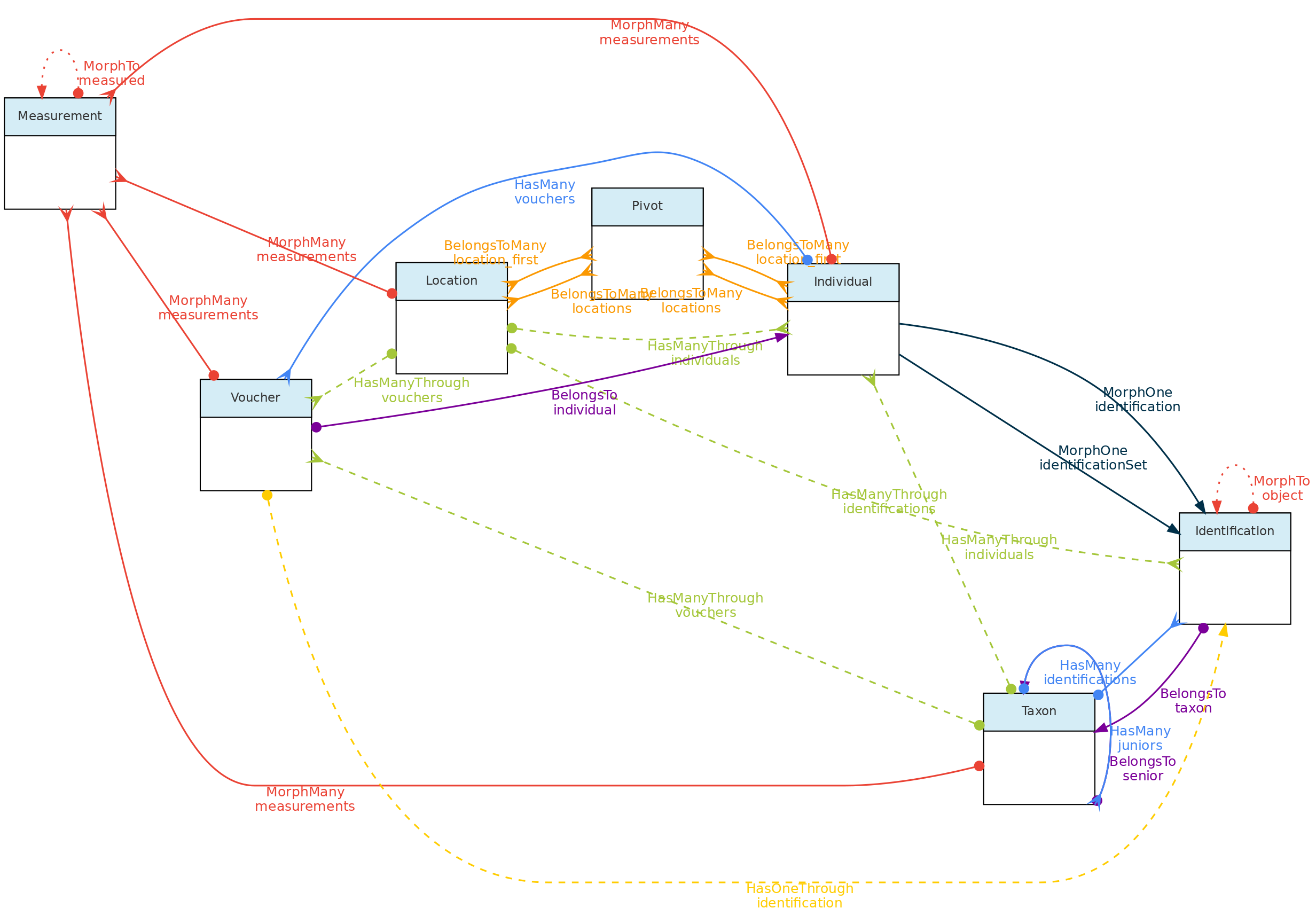

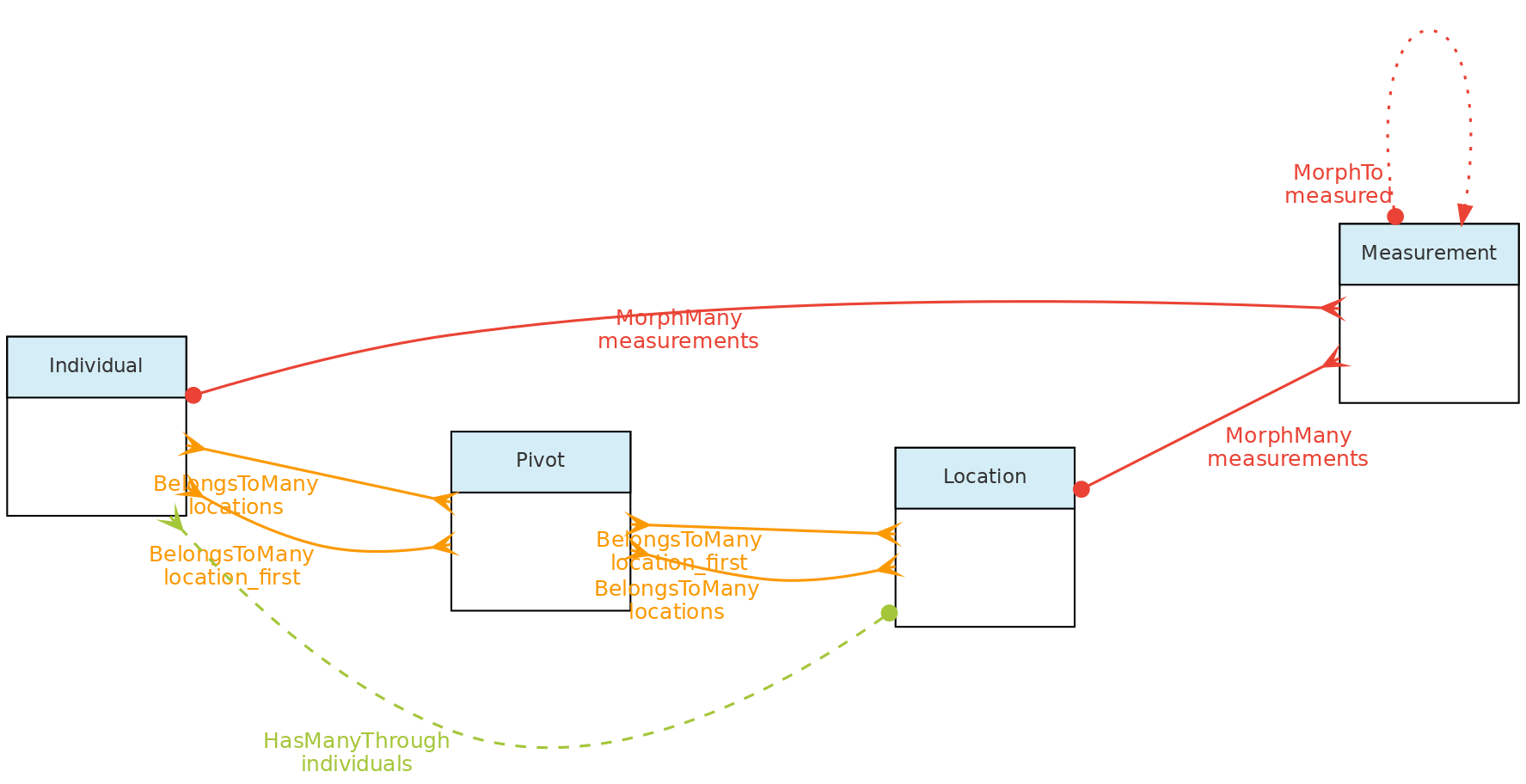

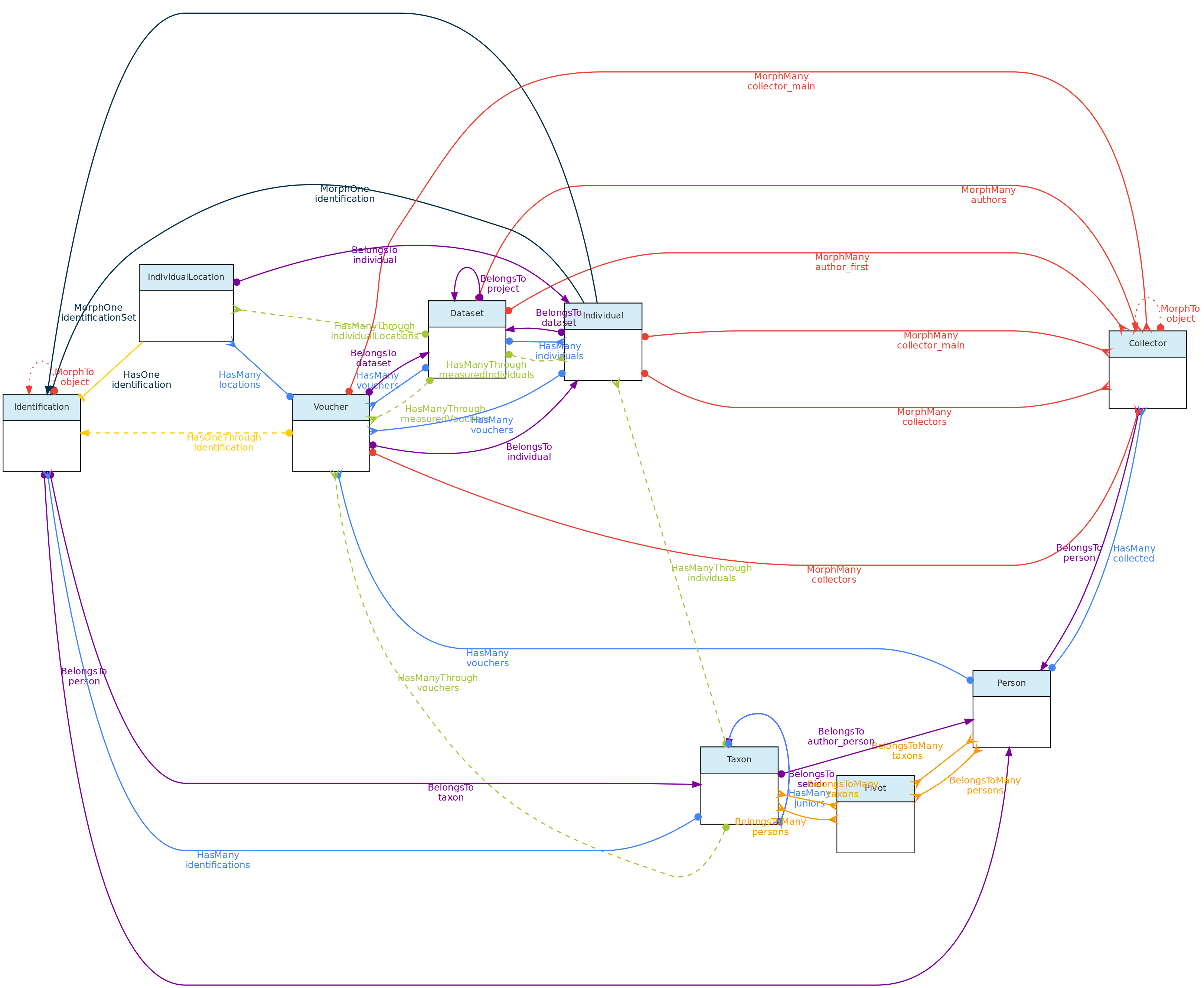

OpenDataBio is an open-source web-based platform designed to help researchers and organizations studying biodiversity in tropical regions to collect, store, relate and serve data. It is designed to accommodate many data types used in biological sciences and their relationships, particularly in biodiversity and ecological studies, and serves as a data repository that allows users to download or request well-organized and documented research data.

Why?

Biodiversity studies frequently require the integration of large amounts of data, which must be standardized for use and sharing and also continuously managed and updated, particularly in tropical regions where biodiversity is huge and poorly known.

OpenDataBio was designed based on the need to organize and integrate historical and current data collected in the Amazon region, taking into account field practices and data types used by ecologists and taxonomists.

OpenDataBio aims to facilitate the standardization and normalization of data by using different API services available online, giving flexibility to users and user groups, and creating the necessary links among Locations, Taxons, Individuals, Vouchers and the Measurements and Media files associated with them. It also makes the data accessible through an API service, facilitating data distribution and analyses.

Main features

- Custom variables - the ability to define custom Traits, i.e. user-defined variables of different types, including some special cases like Spectral Data, Colors, TaxonLinks and GenBank. Measurements for such traits can be recorded for Individuals, Vouchers, Taxons and/or Locations.

- Taxons can be published or unpublished names (e.g. a morphotype), synonyms or valid names, and any node of the tree of life may be stored. Taxon insertions are checked against different nomenclature data sources (Tropicos, IPNI, MycoBank, ZooBank, GBIF), minimizing your search for correct spelling, authorship and synonyms.

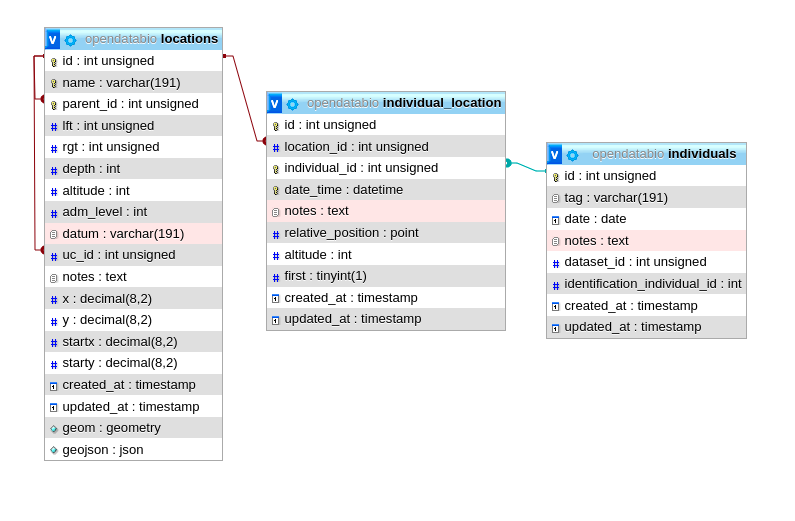

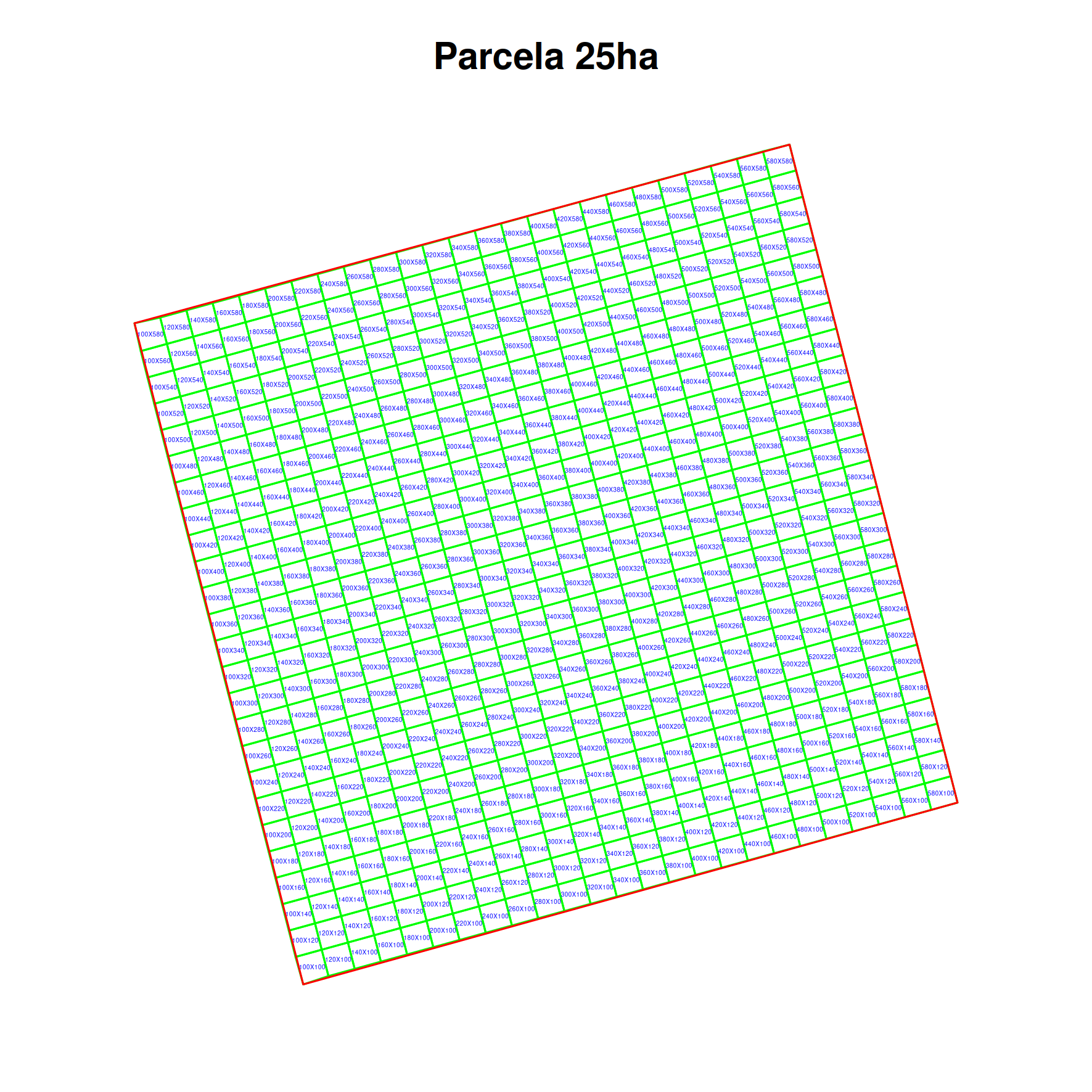

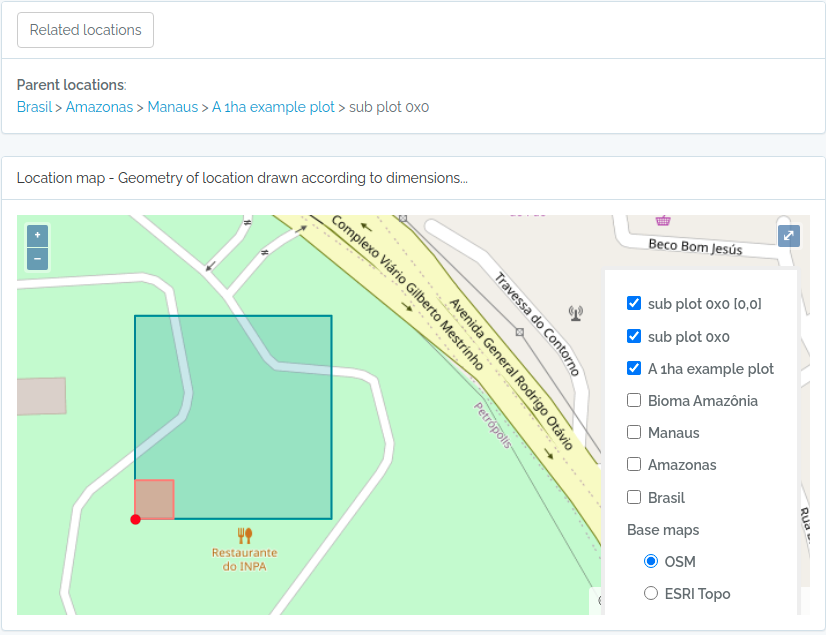

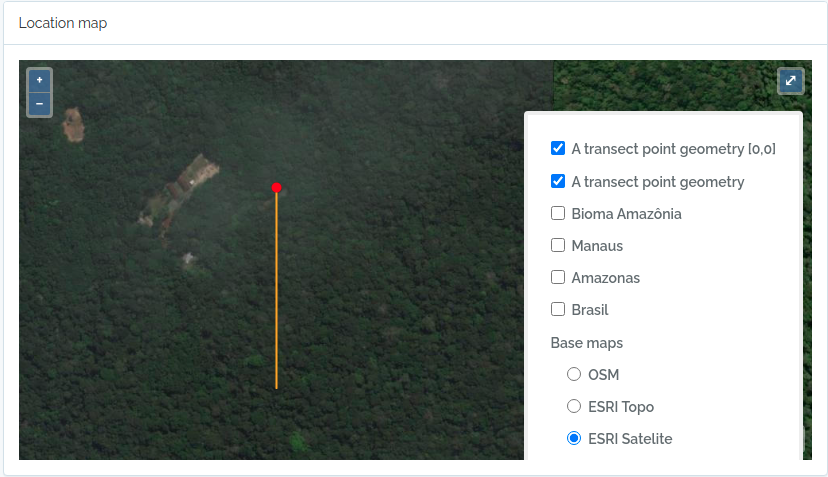

- Locations are stored with their spatial Geometries, allowing location parent detection and spatial queries. Special location types, such as Plots and Transects, can be defined, facilitating commonly used methods in biodiversity studies.

- Data access control - data are organized in Datasets, each of which defines an access policy (public, non-public) and a license for distribution of public datasets, becoming a self-contained dynamic data publication, versioned by the last edit date.

- Different research groups may use a single OpenDataBio installation, having total control over the editing of and access to their particular research data, while sharing common libraries such as Taxonomy, Locations, Bibliographic References and Trait definitions.

- API to access data programmatically - Tools for data exports and imports are provided through API services, along with an API client in the R language, the OpenDataBio-R package.

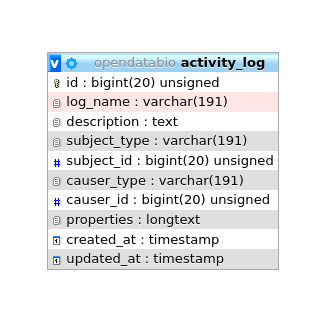

- Auditing - the Activity Model audits changes in any record and downloads of full datasets, which are logged for history tracking.

- The BioCollection model allows administrators of Biological Collections to manage their Voucher records as well as user-requests, facilitating the interaction with users providing samples and data.

- A mobile data collector is planned with ODK or ODK-X.

Learn more

- Getting Started: install OpenDataBio!

- Nginx Installation: standalone nginx setup.

- FAQs: Check out some example code!

2 - Getting Started

OpenDataBio is web-based software supported on the Debian, Ubuntu and Arch Linux distributions, and it may be installed on any Linux-based machine. We have no plans for Windows support, but it may be easy to install on a Windows machine using Docker.

OpenDataBio is written in PHP and developed with the Laravel framework. It requires a web server (Apache or nginx), PHP and a SQL database – tested only with MySQL and MariaDB.

You may install OpenDataBio easily using the Docker files included in the distribution. The repository now includes a docker/prod profile and docker-compose.prod.yml so Docker can also be used for production deployments (with server-specific tuning and secrets management).

If you just want to test OpenDataBio locally, follow the Docker Installation.

Next steps

Prep for installation

- You may want to request a Tropicos.org API key for OpenDataBio to be able to retrieve taxonomic data from the Tropicos.org database. If not provided, mainly the GBIF nomenclatural service will be used;

- OpenDataBio sends emails to registered users, either to inform about a Job that has finished, to send data requests to dataset administrators or for password recovery. You may use a Google Email for this, but will need to change the account security options to allow OpenDataBio to use the account to send emails (you need to turn on the Less secure app access option in the Gmail My Account Page and will need to create a cron job to keep this option alive). Therefore, create a dedicated email address for your installation. Check the “config/mail.php” file for more options on how to send e-mails.

2.1 - First time users

OpenDataBio is software to be used online. Local installations are for testing or development, although it could be used for a single-user production localhost environment.

User roles

If you have registered you need someone to assign you a full user role, so you can enter data.

- If you are installing, the first login to an OpenDataBio installation must be done with the default super-admin user: admin@example.org and password1. These settings should be changed, or the installation will be open to anyone reading the docs;

- Self-registrations only grant access to datasets with privacy set to registered users and allow users to download open-access data, but do not allow the user to edit or add data;

- Only full users can contribute data;

- Only a super admin can grant the full-user role to registered users. Different OpenDataBio installations may have different policies as to how you may gain full-user access; that information is not covered here, so ask the administrators of your installation.

See also User Model.

Prep your full-user account

- Register yourself as Person and assign it as your user default person, creating a link between your user and yourself as collector.

- You need at least one dataset to enter your own data.

- When becoming a full-user, a restricted-access Dataset and Project will be automatically created for you (your Workspaces). You may modify these entities to fit your personal needs.

- You may create as many Projects and Datasets as needed. So, understand how they work and which data they control access to.

Entering data

There are three main ways to import data into OpenDataBio:

- One by one through the web interface

- Using the OpenDataBio POST API services:

  - importing from a spreadsheet file (CSV, XLSX or ODS) using the web interface

  - using the OpenDataBio R package client

- When using the OpenDataBio API services, you must prepare your data or file according to the field options of the POST verb for the specific endpoint you are importing to.

See the Import data with R Tutorial for examples on how to import data with API.

Tips for entering data

- If this is your first time entering data, use the web interface and create at least one record for each model you need. Then play with the privacy settings of your Workspace Dataset, and check whether you can access the data when logged in and when not logged in.

- Use a Dataset for a self-contained set of data that should be distributed as a group. Datasets are dynamic publications and have authors, a date and a title.

- Although ODB attempts to minimize redundancy, giving users flexibility comes at a cost, and some definitions, like those of Traits or Persons, may receive duplicated entries. So, care must be taken when creating such records. Administrators may create a ‘code of conduct’ for the users of an ODB installation to minimize such redundancy.

- Follow an order when importing new data, starting from the libraries of common use. For example, you should first register Locations, Taxons, Persons, Traits and any other common library before importing Individuals or Measurements.

- There is no need to import POINT locations before importing Individuals, because ODB creates the location for you when you provide latitude and longitude, and detects which parent location your individual belongs to. However, if you want to validate your points (understand where such a point location will be placed), you may use the Location API with the querytype parameter specified for this.

- There are different ways to create PLOT and TRANSECT locations - see Locations if that is your case.

- Creating Taxons requires only the specification of a name - ODB will search nomenclature services for you, find the name, metadata and parents, and import all of them if needed. If you are importing published names, just provide this single attribute. Otherwise, if the name is unpublished, you need to provide additional fields. So, separate the batch importation of published and unpublished names into two sets.

- The notes field of any model accepts either plain text or a JSON object formatted as a string. The JSON option allows you to store custom structured data in any model that has the notes field. You may, for example, store as notes some secondary fields from original sources when importing data, or any additional data that is not provided for by the ODB database structure. Such data will not be validated by ODB, and standardization of both tags and values depends on you. JSON notes are imported and exported as a JSON string and presented in the interface as a formatted table; URLs in your JSON are presented as links.
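Since ODB does not validate JSON notes, it may help to build and check the string yourself before importing. The following Python sketch serializes some secondary fields and confirms that the result parses back as JSON. The function name make_notes and all field names and values are hypothetical illustrations, not part of OpenDataBio.

```python
import json


def make_notes(extra_fields: dict) -> str:
    """Serialize secondary fields into a JSON string usable as a notes value."""
    # ensure_ascii=False keeps accented characters readable in the stored string
    return json.dumps(extra_fields, ensure_ascii=False)


# Hypothetical secondary fields taken from an original data source
notes = make_notes({
    "originalPlotCode": "P-12",
    "fieldNotebookPage": 34,
    "sourceUrl": "https://example.org/records/123",
})

# Round-trip check: the string must parse back to the same structure
parsed = json.loads(notes)
print(notes)
print(parsed["fieldNotebookPage"])
```

Keeping the same tags across records (for example always originalPlotCode, never a mix of spellings) is what makes such notes usable later, because the standardization of tags and values is left to you.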

2.2 - Apache Installation

These instructions are for an apache-based installation. For nginx, use Nginx Installation.

Server requirements

- PHP version >= 8.2 (8.3 recommended).

- Web server: apache for this guide. For nginx, use Nginx Installation.

- A SQL database is required. OpenDataBio has been tested with MySQL 8.0 and MariaDB 10.6+; it may also work with Postgres, but this is untested.

- PHP extensions required: openssl, pdo, pdo_mysql, mbstring, tokenizer, xml, dom, gd, exif, bcmath, zip, curl, redis.

- Redis Server is required for queues and cache.

- Tectonic is used for LaTeX/PDF label generation.

- Pandoc is used to translate LaTeX code used in bibliographic references. It is not necessary for installation, but suggested for a better user experience.

- Supervisor is required for background jobs.

Create Dedicated User

The recommended way to install OpenDataBio for production is using a dedicated system user. In these instructions this user is odbserver.

Download OpenDataBio

Login as your Dedicated User and download or clone this software to where you want to install it.

Here we assume this is /home/odbserver/opendatabio so that the installation files will reside in this directory. If this is not your path, change below whenever it applies.

Prep the Server

First, install the prerequisite software: Apache, MySQL, PHP, Redis, Tectonic, Pandoc and Supervisor. On a Debian system, you need to install some PHP extensions as well and enable them:

sudo apt-get install software-properties-common

sudo add-apt-repository ppa:ondrej/php

sudo add-apt-repository ppa:ondrej/apache2

sudo apt-get update

sudo apt-get install mysql-server redis-server tectonic php8.3 libapache2-mod-php8.3 php8.3-intl \

php8.3-mysql php8.3-sqlite3 php8.3-gd php8.3-cli pandoc \

php8.3-mbstring php8.3-xml php8.3-bcmath php8.3-zip php8.3-curl php8.3-redis \

supervisor

sudo a2enmod php8.3

sudo phpenmod mbstring

sudo phpenmod xml

sudo phpenmod dom

sudo phpenmod gd

sudo a2enmod rewrite

sudo a2ensite

sudo systemctl restart apache2.service

#To check if they are installed:

php -m | grep -E 'mbstring|cli|xml|gd|mysql|redis|bcmath|pcntl|zip'

tectonic --version

redis-server --version

Add the following to your Apache configuration.

- Change /home/odbserver/opendatabio to your path (the files must be accessible by apache)

- You may create a new file in the sites-available folder: /etc/apache2/sites-available/opendatabio.conf and place the following code in it.

touch /etc/apache2/sites-available/opendatabio.conf

echo '<IfModule alias_module>

Alias /opendatabio /home/odbserver/opendatabio/public/

Alias /fonts /home/odbserver/opendatabio/public/fonts

Alias /images /home/odbserver/opendatabio/public/images

Alias /build /home/odbserver/opendatabio/public/build

Alias /vendor/livewire /home/odbserver/opendatabio/public/vendor/livewire

<Directory "/home/odbserver/opendatabio/public">

Require all granted

AllowOverride All

</Directory>

</IfModule>' > /etc/apache2/sites-available/opendatabio.conf

This will cause Apache to redirect all requests for /opendatabio to the correct folder, and also allow the provided .htaccess file to handle the rewrite rules, so that the URLs will be pretty. If you would like to access the application when pointing the browser to the server root, add the following directive as well:

RedirectMatch ^/$ /opendatabio/

Content Security Policy (CSP) for Apache

Configure CSP at the web server layer (not in Laravel files). Apply it first in report-only mode, inspect logs, then switch to enforced mode.

For nginx standalone installs, use Nginx Installation.

Apache: where to put it

- Enable required module:

sudo a2enmod headers

sudo systemctl restart apache2

- Edit your active vhost file (example):

sudo nano /etc/apache2/sites-available/opendatabio.conf

- Inside the correct <VirtualHost ...> block (HTTP and/or HTTPS), add:

Header always set Content-Security-Policy-Report-Only "\
default-src 'self'; \
base-uri 'self'; \
form-action 'self'; \
frame-ancestors 'self'; \
object-src 'none'; \
script-src 'self' 'unsafe-eval'; \
style-src 'self' 'unsafe-inline'; \
img-src 'self' data: https://server.arcgisonline.com https://*.tile.openstreetmap.org; \
font-src 'self' data:; \
connect-src 'self';"

- Reload Apache:

sudo apachectl configtest

sudo systemctl reload apache2

Subpath installs (/opendatabio)

If your installation runs under a subpath (for example http://localhost/opendatabio), set in .env:

APP_URL=http://localhost/opendatabio

ASSET_URL=http://localhost/opendatabio

Then refresh generated assets and Livewire files:

php artisan livewire:publish --assets

php artisan optimize:clear

npm run build

Notes

- https://server.arcgisonline.com and https://*.tile.openstreetmap.org are needed for map tiles.

- unsafe-inline/unsafe-eval are temporary compatibility flags; remove after hardening templates/assets.

- Keep Report-Only while tuning policy in production.
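Apache reads each directive as one logical line, so it can be convenient to keep the policy as structured data and generate the single-line header value from it. The following Python sketch does that for the directives listed in this section. The function name build_csp is a hypothetical helper, not part of OpenDataBio or Apache.

```python
def build_csp(directives: dict[str, list[str]]) -> str:
    """Join CSP directives into the single-line value sent in the header."""
    parts = [" ".join([name, *sources]) for name, sources in directives.items()]
    return "; ".join(parts) + ";"


# Directives copied from the report-only policy in this section
policy = {
    "default-src": ["'self'"],
    "base-uri": ["'self'"],
    "form-action": ["'self'"],
    "frame-ancestors": ["'self'"],
    "object-src": ["'none'"],
    "script-src": ["'self'", "'unsafe-eval'"],
    "style-src": ["'self'", "'unsafe-inline'"],
    "img-src": [
        "'self'",
        "data:",
        "https://server.arcgisonline.com",
        "https://*.tile.openstreetmap.org",
    ],
    "font-src": ["'self'", "data:"],
    "connect-src": ["'self'"],
}

header_value = build_csp(policy)
print(header_value)
```

Removing a temporary flag such as 'unsafe-eval' then becomes a one-line change to the dictionary, and the generated value can be pasted between the double quotes of the Header directive.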

Configure your php.ini files. The installer may complain about missing PHP extensions, so remember to activate them in both the CLI file (/etc/php/8.3/cli/php.ini) and the web server file (/etc/php/8.3/apache2/php.ini for the Apache module, or /etc/php/8.3/fpm/php.ini when using PHP-FPM)!

To find the files in use:

php -i | grep 'Configuration File'

Update the following values in them:

memory_limit should be at least 512M

post_max_size should be at least 30M

upload_max_filesize should be at least 30M

Something like:

[PHP]

allow_url_fopen=1

memory_limit = 512M

post_max_size = 100M

upload_max_filesize = 100M
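To confirm that a php.ini file meets the minimums listed above, you can compare its values programmatically. The following Python sketch parses PHP shorthand sizes (K, M, G) and checks the three settings. The function names are hypothetical, the parser is deliberately simple, and the sample text stands in for the contents of your real php.ini.

```python
import re

UNITS = {"": 1, "K": 1024, "M": 1024 ** 2, "G": 1024 ** 3}

# Minimum values stated in this guide
MINIMUMS = {
    "memory_limit": "512M",
    "post_max_size": "30M",
    "upload_max_filesize": "30M",
}


def parse_size(value: str) -> int:
    """Convert a PHP shorthand size such as '512M' to bytes."""
    match = re.fullmatch(r"(\d+)([KMG]?)", value.strip(), flags=re.IGNORECASE)
    if match is None:
        raise ValueError(f"unsupported size value: {value!r}")
    number, unit = match.groups()
    return int(number) * UNITS[unit.upper()]


def read_settings(ini_text: str) -> dict[str, str]:
    """Collect 'key = value' pairs, ignoring sections and ';' comments."""
    settings = {}
    for line in ini_text.splitlines():
        line = line.split(";", 1)[0].strip()
        if "=" in line and not line.startswith("["):
            key, value = line.split("=", 1)
            settings[key.strip()] = value.strip()
    return settings


def check_limits(settings: dict[str, str]) -> dict[str, bool]:
    """Return, per setting, whether it meets the documented minimum."""
    results = {}
    for key, minimum in MINIMUMS.items():
        raw = settings.get(key, "0")
        # memory_limit = -1 means "no limit" in PHP
        unlimited = key == "memory_limit" and raw == "-1"
        results[key] = unlimited or parse_size(raw) >= parse_size(minimum)
    return results


SAMPLE = """[PHP]
allow_url_fopen=1
memory_limit = 512M
post_max_size = 100M
upload_max_filesize = 100M
"""

print(check_limits(read_settings(SAMPLE)))
```

Run the check against both the CLI and the web server ini files, since they are separate files and may differ.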

Enable the Apache modules ‘mod_rewrite’ and ‘mod_alias’ and restart your Server:

sudo a2enmod rewrite

sudo a2ensite opendatabio

sudo systemctl restart apache2.service

Mysql Charset and Collation

- Add the following to your database configuration file (mariadb.cnf or my.cnf). The charset and collation you choose for your installation must match those in ‘config/database.php’.

[mysqld]

character-set-client-handshake = FALSE #without this, there is no effect of the init_connect

collation-server = utf8mb4_unicode_ci

init-connect = "SET NAMES utf8mb4 COLLATE utf8mb4_unicode_ci"

character-set-server = utf8mb4

log-bin-trust-function-creators = 1

sort_buffer_size = 256M #large enough for geometry sort operations

[mariadb] or [mysql]

max_allowed_packet=100M

innodb_log_file_size=300M #no use for mysql

- If using MariaDB and you still have problems of type #1267 Illegal mix of collations, then check here on how to fix that.

Configure supervisord

Configure Supervisor, which is required for jobs. Create a file named opendatabio-worker.conf in the Supervisor configuration folder (/etc/supervisor/conf.d/opendatabio-worker.conf) with the following content:

touch /etc/supervisor/conf.d/opendatabio-worker.conf

echo ";--------------

[program:opendatabio-worker]

process_name=%(program_name)s_%(process_num)02d

command=php /home/odbserver/opendatabio/artisan queue:work --sleep=3 --tries=1 --timeout=0 --memory=512

autostart=true

autorestart=true

user=odbserver

numprocs=8

redirect_stderr=true

stdout_logfile=/home/odbserver/opendatabio/storage/logs/supervisor.log

;--------------" > /etc/supervisor/conf.d/opendatabio-worker.conf

Folder permissions

Folder and file permissions are important for securing a public server installation. If you don’t set them correctly, your site may be at risk.

- Folders storage and bootstrap/cache must be writable by the Server user (usually www-data). Set 0755 permission to these directories.

- Config .env file requires 0640 permission.

- This link has different ways to set up permissions for files and folders of a Laravel application. Below the preferred method:

cd /home/odbserver

#give write permissions to odbserver user and the apache user

sudo chown -R odbserver:www-data opendatabio

sudo find ./opendatabio -type f -exec chmod 644 {} \;

sudo find ./opendatabio -type d -exec chmod 755 {} \;

#in these folders the server stores data and files.

#Make sure their permission is correct

cd /home/odbserver/opendatabio

sudo chgrp -R www-data storage bootstrap/cache

sudo chmod -R ug+rwx storage bootstrap/cache

#make sure media folder has the correct permissions

sudo find ./storage/app/public/media -type f -exec chmod 664 {} \;

sudo find ./storage/app/public/media -type d -exec chmod 775 {} \;

#make sure the .env file has 640 permission

sudo chmod 640 ./.env
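After running the commands above, you may want to verify the result rather than trust it. The following Python sketch reads the permission bits of a file and reports whether users outside the owner and group have any access, which is what the 640 mode on .env is meant to prevent. The function names are hypothetical, and the demonstration uses a temporary file instead of a real installation path.

```python
import os
import stat
import tempfile


def mode_of(path: str) -> str:
    """Return the permission bits of path as an octal string, e.g. '640'."""
    return format(stat.S_IMODE(os.stat(path).st_mode), "o")


def is_closed_to_others(path: str) -> bool:
    """True when users outside the owner and group have no access at all."""
    return stat.S_IMODE(os.stat(path).st_mode) & stat.S_IRWXO == 0


# Demonstration on a throwaway file standing in for the real .env
with tempfile.TemporaryDirectory() as tmp:
    env_path = os.path.join(tmp, ".env")
    with open(env_path, "w") as handle:
        handle.write("APP_ENV=production\n")
    os.chmod(env_path, 0o640)
    print(mode_of(env_path), is_closed_to_others(env_path))
```

On a real server you would call these functions with /home/odbserver/opendatabio/.env (or your own path) after each deployment.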

Install OpenDataBio

- Many Linux distributions (most notably Ubuntu and Debian) have different php.ini files for the command line interface and the Apache plugin. It is recommended to use the configuration file for Apache when running the install script, so it will be able to correctly point out missing extensions or configurations. To do so, find the correct path to the .ini file, and export it before using the php install command.

For example,

export PHPRC=/etc/php/8.3/apache2/php.ini

The installation script will download the Composer dependency manager and all required PHP libraries listed in the composer.json file. However, if your server is behind a proxy, you should install and configure Composer independently. We have implemented PROXY configuration, but we are not using it anymore and have not tested it properly (if you require adjustments, open an issue on GitLab).

The script will prompt you for configuration options, which are stored in the environment .env file in the application root folder.

You may, optionally, configure this file before running the installer:

- Create a .env file with the contents of the provided .env.example: cp .env.example .env

- Read the comments in this file and adjust accordingly.

- Make sure assets_url is correct for your deployment URL/subpath.

- Run the installer:

cd /home/odbserver/opendatabio

php install

- Build frontend assets after .env is configured (required when assets_url is added or changed):

npm run build

- Seed data - the script above will ask if you want to install seed data for Locations and Taxons - seed data is version specific. Check the seed data repository version notes.

If the install script finishes with success, you’re good to go! Point your browser to http://localhost/opendatabio. The database migrations include an administrator account, with login admin@example.org and password password1. Change the password after installing.

Installation issues

There are countless possible ways to install the application, but they may involve more steps and configurations.

- If your browser returns 500|SERVER ERROR, look at the last error in storage/logs/laravel.log. If you have ERROR: No application encryption key has been specified, run:

php artisan key:generate

php artisan config:cache

- If you receive the error “failed to open stream: Connection timed out” while running the installer, this indicates a misconfiguration of your IPv6 routing. The easiest fix is to disable IPv6 routing on the server.

- If you receive errors during the random seeding of the database, you may attempt to remove the database entirely and rebuild it. Of course, do not run this on a production installation.

php artisan migrate:fresh

- You may also replace the Locations and Taxons tables with seed data after a fresh migration using:

php seedodb

Post-install configs

- If your import/export jobs are not being processed, make sure Supervisor is running (systemctl start supervisord && systemctl enable supervisord; on Debian and Ubuntu the service is named supervisor), and check the log files at storage/logs/supervisor.log.

- You can change several configuration variables for the application. The most important of those are probably set by the installer, and include database configuration and proxy settings, but many more exist in the .env and config/app.php files. In particular, you may want to change the language, timezone and e-mail settings. Run php artisan config:cache after updating the config files.

- In order to stop search engine crawlers from indexing your database, add the following to your “robots.txt” in your server root folder (in Debian, /var/www/html):

User-agent: *

Disallow: /
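To check that these two lines really block well-behaved crawlers, you can evaluate them with the robots.txt parser from the Python standard library. The sketch below is only an illustration: the helper name is_blocked is hypothetical, and the URL is the example address used elsewhere in this guide.

```python
from urllib.robotparser import RobotFileParser

ROBOTS_TXT = """User-agent: *
Disallow: /
"""


def is_blocked(robots_txt: str, url: str, agent: str = "*") -> bool:
    """Return True when the given robots.txt forbids agent from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return not parser.can_fetch(agent, url)


print(is_blocked(ROBOTS_TXT, "http://localhost/opendatabio"))
```

Keep in mind that robots.txt is advisory: it stops compliant search engines from indexing, but it is not an access control mechanism, so private data still depends on the Dataset access policies.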

Updating an existing Apache installation

Before updating, back up your database, .env, and storage/app/public/media.

Before running commands, review config diffs for the target version:

- Compare .env with .env.example (including assets_url)

- Check PHP settings (php.ini in CLI and FPM/Apache)

- Check Supervisor worker settings

- Put the application in maintenance mode:

cd /home/odbserver/opendatabio

php artisan down

- Update source code to the target version:

git fetch --tags

git checkout <target-tag-or-branch>

- Update dependencies and apply database migrations:

composer install --no-dev --optimize-autoloader

php artisan migrate:status

php artisan migrate --force

- Rebuild frontend assets after .env updates:

npm run build

- Refresh caches and restart queue workers:

php artisan optimize:clear

php artisan config:cache

php artisan queue:restart

echo "" > storage/logs/laravel.log

- Bring the application back online:

php artisan up

If the target version includes new environment variables (compare yours with the contents of .env.example), add them to .env before running asset/cache commands.
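Comparing the two files by eye is error-prone when they are long. The following Python sketch lists the keys that exist in .env.example but are missing from .env. The function names are hypothetical, the parsing is a simplification that only looks at KEY=value lines, and the two sample strings stand in for the real file contents.

```python
def env_keys(text: str) -> set[str]:
    """Collect variable names from KEY=value lines, skipping comments and blanks."""
    keys = set()
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        keys.add(line.split("=", 1)[0].strip())
    return keys


def missing_keys(env_text: str, example_text: str) -> list[str]:
    """Return keys present in the example file but absent from the current file."""
    return sorted(env_keys(example_text) - env_keys(env_text))


# Sample contents standing in for the real files
current_env = "APP_URL=http://localhost/opendatabio\n"
example_env = "# application\nAPP_URL=\nASSET_URL=\n"

print(missing_keys(current_env, example_env))
```

In practice you would read both files from the application root with open(...).read() and add any reported key to .env before running the asset and cache commands.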

Storage & Backups

You may change storage configurations in config/filesystems.php, where you may define cloud-based storage, which may be needed if you have many users submitting media files, requiring lots of drive space.

- Data downloads are queued as jobs and a file is written to a temporary folder; the file is deleted when the job is deleted by the user. This folder is defined as the download disk in the filesystems.php config file, which points to storage/app/public/downloads. The UserJobs web interface becomes hard to navigate as jobs accumulate, which pushes users to delete old jobs, but a cron cleaning job may be advisable in your installation;

- Media files are by default stored in the media disk, which places files in the folder storage/app/public/media;

- For a regular configuration, create both directories storage/app/public/downloads and storage/app/public/media with writable permissions for the Server user (see the Folder permissions topic);

- Remember to include the media folder in a backup plan.
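The cron cleaning job mentioned above could be as small as a script that removes download files older than a chosen age. The following Python sketch shows one way to do it. The function name, the seven-day threshold and the file names are assumptions made for the example, and it has not been confirmed how OpenDataBio reacts when a download file disappears while its job record still exists, so test it on a non-production copy first.

```python
import os
import tempfile
import time
from pathlib import Path


def remove_old_files(folder: str, max_age_days: float, dry_run: bool = True) -> list[Path]:
    """Return files in folder older than max_age_days; delete them unless dry_run."""
    cutoff = time.time() - max_age_days * 86400
    matched = []
    for path in sorted(Path(folder).iterdir()):
        if path.is_file() and path.stat().st_mtime < cutoff:
            if not dry_run:
                path.unlink()
            matched.append(path)
    return matched


# Demonstration in a temporary folder standing in for storage/app/public/downloads
with tempfile.TemporaryDirectory() as tmp:
    old_file = Path(tmp, "export-old.csv")
    new_file = Path(tmp, "export-new.csv")
    old_file.write_text("id\n1\n")
    new_file.write_text("id\n2\n")
    ten_days_ago = time.time() - 10 * 86400
    os.utime(old_file, (ten_days_ago, ten_days_ago))  # pretend the file is 10 days old

    removed = remove_old_files(tmp, max_age_days=7, dry_run=False)
    print([p.name for p in removed])
    print(sorted(p.name for p in Path(tmp).iterdir()))
```

The dry_run default means a first run only reports what would be deleted, which is a safer starting point for a scheduled job.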

2.3 - Docker Installation

The easiest way to install and run OpenDataBio is using Docker and the docker configuration files provided, which contain the required configuration to run OpenDataBio. It uses nginx, MySQL, and Supervisor for queues.

By default, the quick-start flow is optimized for development and local testing.

Production profile

OpenDataBio now ships a production-oriented Docker profile:

- docker/prod/nginx.conf

- docker/prod/php.ini

- docker/prod/www.conf

- docker-compose.prod.yml

Run production compose with:

docker compose -f docker-compose.prod.yml build

docker compose -f docker-compose.prod.yml up -d

Key differences from dev:

- Uses docker/prod/* nginx/php-fpm configs.

- Removes source bind-mounts for app code.

- Disables phpMyAdmin by default (dev-only profile).

- Publishes nginx on port 80 (adjust if behind reverse proxy).

CSP in nginx (report-only) is included in docker/prod/nginx.conf. Keep report-only first, then enforce after validation.

Docker files

laravel-app/

----docker/*

----.env.docker

----docker-compose.yml

----Dockerfile

----Makefile

These are adapted from this link, where you find a production setting as well.

Installation

Download OpenDataBio

Prerequisites

- Docker with Compose plugin (docker compose v2).

- Linux/mac: user in the docker group or run with sudo.

- Windows: Docker Desktop (WSL2/Hyper-V enabled).

Quick start (Linux/mac, requires make)

cd opendatabio

make docker-init # copies .env.docker, builds/starts, installs composer, key, migrates, storage:link

make docker-init SEED=1 # same as above + optional seed for Locations/Taxons

- After configuring .env (or whenever ASSET_URL changes), rebuild assets:

npm ci #may need this in production

npm run build

- App: http://localhost:8081 (user admin@example.org / password1)

- phpMyAdmin: http://localhost:8082

Windows (PowerShell)

cd opendatabio

powershell -ExecutionPolicy Bypass -File scripts/docker-init.ps1

# optional seed

powershell -ExecutionPolicy Bypass -File scripts/docker-init.ps1 -Seed

Manual commands (if you do not have make installed)

cp .env.docker .env

docker compose up -d

docker compose exec -T -u www-data laravel composer install --optimize-autoloader

docker compose exec -T -u www-data laravel php artisan key:generate --force

docker compose exec -T -u www-data laravel php artisan migrate --force

docker compose exec -T -u www-data laravel php artisan storage:link

Optional seed without make:

docker compose exec -T -u www-data laravel php getseeds

docker exec -i odb_mysql mysql -uroot -psecret odbdocker < storage/Location*.sql

docker exec -i odb_mysql mysql -uroot -psecret odbdocker < storage/Taxon*.sql

rm storage/Location*.sql storage/Taxon*.sql

Data persistence

The docker images may be deleted without losing any data. The mysql tables are stored in a volume. You may change this to a local path bind.

docker volume list

Using

The Makefile file contains the following commands to interact with the docker containers and odb.

Commands to build and create the app

- make docker-init - copy .env.docker (if missing), build/start containers, install composer, key, migrate, storage:link

- make build - build containers

- make key-generate - generate the app key and add it to .env

- make composer-install - install php dependencies

- make composer-update - update php dependencies

- make composer-dump-autoload - execute composer dump-autoload within the container

- make migrate - create or update the database

- make drop-migrate - delete and recreate the database

- make seed-odb - seed the database with locations and taxons

Commands to access the docker containers

- make start - start all containers

- make stop - stop all containers

- make restart - restart all containers

- make ssh - enter the main laravel app container

- make ssh-mysql - enter the mysql container, so you may log in to the database using mysql -uUSER -pPWD

- make mysql - enter the docker mysql console

- make ssh-nginx - enter the nginx container

- make ssh-supervisord - enter the supervisord container

Maintenance commands

- make optimize - clean caches and log files

- make info - show app info

- make logs - show laravel logs

- make logs-mysql - show mysql logs

- make logs-nginx - show nginx logs

- make logs-supervisord - show supervisor logs

Deleting & rebuilding

If you have issues and changed the docker files, you may need to rebuild:

#delete all images without losing data

make stop #first stop all

docker system prune -a #answer yes when prompted

make build

make start

Updating an existing Docker installation

Before updating, back up your database and storage/app/public/media.

Before running commands, review config diffs for the target version:

- Compare .env with .env.example (including assets_url)

- Check PHP settings from the target profile (docker/prod/php.ini or your custom PHP config)

- Check Supervisor settings (docker/supervisord.conf or your deployment equivalent)

- Update source code to the target version:

cd opendatabio

git fetch --tags

git checkout <target-tag-or-branch>

- Rebuild and restart containers:

make stop

make build

make start

- Update PHP dependencies and run database migrations:

make composer-install

make migrate

- Rebuild frontend assets after .env updates:

npm run build

- Refresh Laravel caches and restart queue workers:

make optimize

docker compose exec -T -u www-data laravel php artisan queue:restart

If the new version introduces changes in .env, add the new keys before asset/cache/worker refresh in production.

2.4 - Nginx Installation

These instructions are for an nginx-based installation. If you prefer Apache, use the Apache installation page.

Server requirements

- Supported PHP version >= 8.2 (8.3 recommended).

- Web server: nginx.

- SQL database: MySQL or MariaDB (tested with MySQL 8.0 and MariaDB 10.6+).

- Required PHP extensions: openssl, pdo, pdo_mysql, mbstring, tokenizer, xml, dom, gd, exif, bcmath, zip, curl, redis.

- Redis for queues/cache.

- Tectonic for label PDF generation.

- Pandoc for bibliographic rendering (recommended).

- Supervisor for background jobs.

Nginx site config

Create your site config file (example):

sudo nano /etc/nginx/sites-available/opendatabio

Use this base server block (adjust paths/domain):

server {

listen 80;

server_name your-domain.example;

root /home/odbserver/opendatabio/public;

index index.php index.html;

charset utf-8;

client_max_body_size 300M;

location / {

try_files $uri $uri/ /index.php?$query_string;

}

location ~ \.php$ {

try_files $uri =404;

fastcgi_split_path_info ^(.+\.php)(/.+)$;

fastcgi_pass unix:/var/run/php/php8.3-fpm.sock;

fastcgi_index index.php;

include fastcgi_params;

fastcgi_param SCRIPT_FILENAME $document_root$fastcgi_script_name;

fastcgi_param PATH_INFO $fastcgi_path_info;

fastcgi_read_timeout 300;

}

location ~ /\. {

deny all;

}

}

Enable and reload:

sudo ln -s /etc/nginx/sites-available/opendatabio /etc/nginx/sites-enabled/opendatabio

sudo nginx -t

sudo systemctl reload nginx

Content Security Policy (CSP)

Edit the same nginx site file and add inside the server { ... } block:

add_header Content-Security-Policy-Report-Only "

default-src 'self';

base-uri 'self';

form-action 'self';

frame-ancestors 'self';

object-src 'none';

script-src 'self' 'unsafe-eval';

style-src 'self' 'unsafe-inline';

img-src 'self' data: https://server.arcgisonline.com https://*.tile.openstreetmap.org;

font-src 'self' data:;

connect-src 'self';

" always;

Then reload:

sudo nginx -t

sudo systemctl reload nginx

Notes:

- Start with Report-Only, then move to enforced CSP after validating logs.

- https://server.arcgisonline.com and https://*.tile.openstreetmap.org are required for map tiles.

Subpath installs (/opendatabio)

If your installation runs under a subpath (for example http://localhost/opendatabio), set in .env:

APP_URL=http://localhost/opendatabio

ASSET_URL=http://localhost/opendatabio

Then refresh generated assets and Livewire files:

php artisan livewire:publish --assets

php artisan optimize:clear

npm run build

Shared application setup

To avoid repeating the same instructions, use these sections from Apache installation (they also apply to nginx deployments):

- PHP settings (php.ini) in Apache Installation

- Configure supervisord in Apache Installation

- Folder permissions in Apache Installation

- Install OpenDataBio in Apache Installation

- Post-install configs in Apache Installation

2.5 - Customize Installation

Simple changes that can be made to the layout of an OpenDataBio website

Logo and Background Image

To replace the navigation bar logo and the landing page image, put your image files in /public/custom/, replacing the existing files without changing their names.

Texts and Info

To change the welcome text of the landing page, change the values of the array keys in the following files:

- /resources/lang/en/customs.php

- /resources/lang/pt-br/customs.php

Do not remove the entry keys. Set a value to null to keep it from appearing in the footer and landing page.

Local Documentation

You can add documentation in *.md format to the repository in files located in the following folders:

- /resources/docs/en/*

- /resources/docs/pt/*

This space is reserved for administrators to set documentation and custom directives for users of a specific OpenDataBio installation. For example, this is a place to include a code of conduct for users, information on who to contact to become a full user, specific tutorials, and so on.

NavBar and Footer

- To change the color of the top navigation bar and the footer, replace the Bootstrap 5 CSS classes in the corresponding tags of the files in the folder /resources/view/layout.

- You may add HTML to the footer and navbar, change the logo size, and so on.

2.6 - Upgrade OpenDataBio

Use this guide to upgrade an existing OpenDataBio installation with minimal downtime.

Before you start

- Read the target release notes and confirm any breaking changes.

- Back up at least:

- Database dump

- .env

- storage/app/public/media

- Compare current config files against target-version templates/settings:

- .env against .env.example (including assets_url)

- Supervisor worker config (/etc/supervisor/conf.d/opendatabio-worker.conf or container equivalent)

- PHP config (php.ini for CLI and FPM/Apache)

- Plan a maintenance window for production.

Upgrade (Apache or nginx installation)

- Put application in maintenance mode:

cd /home/odbserver/opendatabio

php artisan down

- Update source code:

git fetch --tags

git checkout <target-tag-or-branch>

- Install dependencies and run migrations:

composer install --no-dev --optimize-autoloader

php artisan migrate:status

php artisan migrate --force

- Rebuild frontend assets after .env changes (required when assets_url changes):

npm run build

- Refresh caches, clean logs, and restart workers:

php artisan optimize:clear

php artisan config:cache

php artisan queue:restart

sudo systemctl restart supervisor.service

# Also restart the web stack (nginx + PHP-FPM, or Apache) and the database (MySQL or MariaDB); service unit names vary by distribution.

echo "" > storage/logs/laravel.log

echo "" > storage/logs/supervisor.log

- Bring app back online:

php artisan up

Upgrade (Docker installation)

- Update source code:

cd opendatabio

git fetch --tags

git checkout <target-tag-or-branch>

- Rebuild and restart containers:

make stop

make build

make start

- Install dependencies and run migrations:

make composer-install

make migrate

- Rebuild frontend assets after .env changes (required when assets_url changes):

npm run build

- Refresh caches and restart workers:

make optimize

docker compose exec -T -u www-data laravel php artisan queue:restart

Environment variables

If the target version introduces new environment variables, compare .env with .env.example and add missing keys before asset/cache/worker commands in production.
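The comparison of .env with .env.example can be scripted. The following is a minimal sketch, not part of OpenDataBio: the helper names `env_keys` and `missing_keys` are hypothetical, and the parser only handles plain `KEY=value` lines and `#` comments.

```python
def env_keys(text):
    """Return the set of variable names defined in dotenv-style text."""
    keys = set()
    for line in text.splitlines():
        line = line.strip()
        # Skip blank lines, comments, and anything that is not KEY=value.
        if not line or line.startswith("#") or "=" not in line:
            continue
        keys.add(line.split("=", 1)[0].strip())
    return keys


def missing_keys(env_text, example_text):
    """List keys present in the template but absent from the live file."""
    return sorted(env_keys(example_text) - env_keys(env_text))


# Inline stand-ins for the two files, so the sketch runs without a checkout.
current = "APP_URL=http://localhost/opendatabio\n"
template = "# template\nAPP_URL=http://localhost\nASSET_URL=http://localhost\n"
print(missing_keys(current, template))
```

To check a real installation, read both files (for example with `pathlib.Path(".env").read_text()`) and pass their contents to `missing_keys`.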

Rollback strategy

If something fails after migration:

- Keep maintenance mode on.

- Restore database backup and .env.

- Check out the previous known-good tag.

- Rebuild dependencies/containers and validate logs before php artisan up.

3 - API services

Every OpenDataBio installation provides an API service, allowing users to GET data programmatically and collaborators to POST new data into its database. The service gives open access to public data; user authentication is required to POST data or to GET data with restricted access.

The OpenDataBio API (Application Programming Interface) allows users to interact with an OpenDataBio database for exporting, importing and updating data without using the web interface.

The OpenDataBio R package is a client for this API, allowing the interaction with the data repository directly from R and illustrating the API capabilities so that other clients can be easily built.

The OpenDataBio API allows querying of the database, data importation and data edition (update) through a REST inspired interface. All API requests and responses are formatted in JSON.

The API call

A simple call to the OpenDataBio API has four independent pieces:

[HTTP-verb] + base-URL + endpoint + request-parameters

- HTTP-verb - either GET for exports or POST for imports.

- base-URL - the URL used to access your OpenDataBio server plus /api/v0. For example, http://opendatabio.inpa.gov.br/api/v0

- endpoint - represents the object or collection of objects that you want to access. For example, for querying taxonomic names, the endpoint is “taxons”.

- request-parameters - represent filtering and processing that should be done with the objects, and are placed in the API call after a question mark. For example, to retrieve only valid taxonomic names (non-synonyms), end the request with ?valid=1.

The API call above can be entered in a browser to GET public access data. For example, to get the list of valid taxons from an OpenDataBio installation the API request could be:

https://opendb.inpa.gov.br/api/v0/taxons?valid=1&limit=10

When using the OpenDataBio R package this call would be odb_get_taxons(list(valid=1)).

A response would be something like:

{

"meta":

{

"odb_version":"0.9.1-alpha1",

"api_version":"v0",

"server":"http://opendb.inpa.gov.br",

"full_url":"https://opendb.inpa.gov.br/api/v0/taxons?valid=1&limit1&offset=100"},

"data":

[

{

"id":62,

"parent_id":25,

"author_id":null,

"scientificName":"Laurales",

"taxonRank":"Ordem",

"scientificNameAuthorship":null,

"namePublishedIn":"Juss. ex Bercht. & J. Presl. In: Prir. Rostlin: 235. (1820).",

"parentName":"Magnoliidae",

"family":null,

"taxonRemarks":null,

"taxonomicStatus":"accepted",

"ScientificNameID":"http:\/\/tropicos.org\/Name\/43000015 | https:\/\/www.gbif.org\/species\/407",

"basisOfRecord":"Taxon"

}]}
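The call and response above can be sketched in Python. This is an illustration only: `build_api_url` and `scientific_names` are hypothetical helper names, and the response text is a trimmed copy of the example above rather than the result of a live request.

```python
import json
from urllib.parse import urlencode


def build_api_url(base_url, endpoint, params=None, version="v0"):
    """Join base-URL + /api/<version> + endpoint + request-parameters."""
    url = f"{base_url.rstrip('/')}/api/{version}/{endpoint.strip('/')}"
    if params:
        url += "?" + urlencode(params)
    return url


def scientific_names(response_text):
    """Return the scientificName of every record in a taxons response."""
    payload = json.loads(response_text)
    return [record["scientificName"] for record in payload["data"]]


url = build_api_url("https://opendb.inpa.gov.br", "taxons", {"valid": 1, "limit": 10})
print(url)

# Trimmed copy of the example response, used instead of a network call.
sample = '{"meta": {"api_version": "v0"}, "data": [{"id": 62, "scientificName": "Laurales"}]}'
print(scientific_names(sample))
```

Keeping the URL construction separate from the parsing makes it easy to swap in any HTTP client for the actual request.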

API Authentication

- Not required for getting any data with public access in the ODB database, which by default includes locations, taxons, bibliographic references, persons and traits.

- Authentication is required to GET any data that is not publicly accessible, and to POST and PUT data.

- Authentication is done using an API token, which can be found under your user profile on the web interface. The token is assigned to a single database user and should not be shared, exposed, e-mailed or stored in version control.

- To authenticate against the OpenDataBio API, use the token in the “Authorization” header of the API request. When using the R client, pass the token to the odb_config function: cfg = odb_config(token="your-token-here").

- The token controls the data you can get and edit.

Users will only have access to the data for which they have permission and to any data with public access in the database, which by default includes locations, taxons, bibliographic references, persons and traits. Access to measurements, individuals and vouchers depends on the permissions attached to the user's token.
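For clients other than the R package, the token handling described above might look like the following sketch. It assumes the raw token is sent as the value of the Authorization header (check your installation if it expects a scheme prefix), and the environment variable name `ODB_API_TOKEN` is hypothetical. The request object is only built here, not sent.

```python
import os
from urllib.request import Request


def authenticated_request(url, token):
    """Build a GET request carrying the API token in the Authorization header."""
    if not token:
        raise ValueError("an API token is required for restricted data")
    # Assumption: the header value is the bare token, with no scheme prefix.
    headers = {"Authorization": token, "Accept": "application/json"}
    return Request(url, headers=headers)


# Read the token from the environment so it never lands in version control.
token = os.environ.get("ODB_API_TOKEN", "your-token-here")
request = authenticated_request(
    "https://opendb.inpa.gov.br/api/v0/individuals?limit=5", token
)
print(request.get_method(), request.full_url)
```

Passing the request to `urllib.request.urlopen` would perform the call; that step is left out so the sketch runs offline.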

API versions

The OpenDataBio API follows its own version number. This means that a client can expect to use the same code and get the same answers regardless of which OpenDataBio version the server is running. All changes made within the same API version (>= 1) should be backward compatible. API versioning is handled by the URL: to ask for a specific API version, put the version number between the base URL and the endpoint:

https://opendatabio.inpa.gov.br/opendatabio/api/v1/taxons

https://opendatabio.inpa.gov.br/opendatabio/api/v2/taxons

3.1 - Quick reference

base-URL + ‘/api/v0/’ + endpoint + ‘?’ + request-parameters

https://opendb.inpa.gov.br/api/v0/taxons?valid=1&limit=2&offset=10

GET DATA (downloads)

Shared get-parameters

When multiple parameters are specified, they are combined with an AND operator. There is no OR parameter option in searches.

The limit and offset parameters can be used to divide your search into parts. Alternatively, use the save_job=T option and then download the data with the get_file=T parameter from the userjobs API.

Some parameters accept an asterisk as wildcard, so api/v0/taxons?name=Euterpe will return taxons with name exactly as “Euterpe”, while api/v0/taxons?name=Eut* will return names starting with “Eut”.

| Parameter | Required | Description | Example |

|---|---|---|---|

| id | No | Single id or comma-separated list to filter or target records. | 1,2,3 |

| limit | No | Maximum number of records to return. | 100 |

| offset | No | The starting position of the record set to be exported. Used together with limit to limit results. | 10000 |

| fields | No | Comma separated list of the fields to include in the response or special words all/simple/raw, default to simple | id,scientificName or all |

| save_job | No | If 1, save the results as file to download later via userjobs + get_file = T | 1 |
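Splitting a search into parts with limit and offset can be sketched as a small paging loop. This is an illustration under assumptions: offset is treated as the zero-based number of records to skip, `fetch_all` is a hypothetical helper, and `fake_fetch` stands in for a real HTTP call so the example runs offline.

```python
def fetch_all(fetch_page, page_size=100):
    """Yield every record, advancing offset until a short page comes back."""
    offset = 0
    while True:
        records = fetch_page(limit=page_size, offset=offset)
        yield from records
        # A page shorter than page_size means there is nothing left to fetch.
        if len(records) < page_size:
            break
        offset += page_size


# Stand-in for the server: slices a local list the way limit/offset would.
fake_records = [{"id": i} for i in range(1, 8)]


def fake_fetch(limit, offset):
    return fake_records[offset:offset + limit]


ids = [record["id"] for record in fetch_all(fake_fetch, page_size=3)]
print(ids)
```

In real use, `fetch_page` would issue a GET request with the given limit and offset and return the "data" list of the response.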

Endpoint parameters

| Endpoint | Description | Parameters |

|---|---|---|

| / | Tests your access/token. | — |

| bibreferences | Bibliographic references (GET lists, POST creates). | id, bibkey, biocollection, dataset, fields, job_id, limit, offset, save_job, search, taxon, taxon_root |

| biocollections | Biocollections (GET lists, POST creates). | id, acronym, fields, irn, job_id, limit, name, offset, save_job, search |

| datasets | Datasets and published dataset files (GET lists, POST creates via import job). | id, bibreference, fields, file_name, has_versions, include_url, limit, list_versions, name, offset, project, save_job, search, summarize, tag, tagged_with, taxon, taxon_root, traits |

| individuals | Individuals (GET lists, POST creates, PUT updates). | id, dataset, date_max, date_min, fields, job_id, limit, location, location_root, odbrequest_id, offset, person, project, save_job, tag, taxon, taxon_root, trait, vernacular |

| individual-locations | Occurrences for individuals with multiple locations (GET lists, POST/PUT upserts). | id, dataset, date_max, date_min, fields, individual, limit, location, location_root, offset, person, project, save_job, tag, taxon, taxon_root |

| languages | Lists available interface/data languages. | fields, limit, offset |

| locations | Locations (GET lists, POST creates, PUT updates). | id, adm_level, dataset, fields, job_id, lat, limit, location_root, long, name, offset, parent_id, project, querytype, root, save_job, search, taxon, taxon_root, trait |

| measurements | Trait measurements (GET lists, POST creates/imports via ImportMeasurements job, PUT bulk updates). | id, bibreference, dataset, date_max, date_min, fields, individual, job_id, limit, location, location_root, measured_id, measured_type, offset, person, project, save_job, taxon, taxon_root, trait, trait_type, voucher |

| media | Media metadata (GET lists, POST creates, PUT updates). | id, dataset, fields, individual, job_id, limit, location, location_root, media_id, media_uuid, offset, person, project, save_job, tag, taxon, taxon_root, uuid, voucher |

| persons | People (GET lists, POST creates, PUT updates). | id, abbrev, email, fields, job_id, limit, name, offset, save_job, search |

| projects | Projects (GET lists). | id, fields, job_id, limit, offset, save_job, search, tag |

| taxons | Taxonomic names (GET lists, POST creates). | id, bibreference, biocollection, dataset, external, fields, job_id, level, limit, location_root, name, offset, person, project, root, save_job, taxon_root, trait, valid, vernacular |

| traits | Trait definitions (GET lists, POST creates). | id, bibreference, categories, dataset, fields, job_id, language, limit, name, object_type, offset, save_job, search, tag, taxon, taxon_root, trait, type |

| vernaculars | Vernacular names (GET lists, POST creates). | id, fields, individual, job_id, limit, location, location_root, offset, save_job, taxon, taxon_root |

| vouchers | Voucher specimens (GET lists, POST creates, PUT updates). | id, bibreference, bibreference_id, biocollection, biocollection_id, collector, dataset, date_max, date_min, fields, individual, job_id, limit, location, location_root, main_collector, number, odbrequest_id, offset, person, project, save_job, taxon, taxon_root, trait, vernacular |

| userjobs | Background jobs (imports/exports) (GET lists). | id, fields, get_file, limit, offset, status |

| activities | Lists activity log entries. | id, description, fields, individual, language, limit, location, log_name, measurement, offset, save_job, subject, subject_id, taxon, taxon_root, voucher |

| tags | Tags/keywords (GET lists). | id, dataset, fields, job_id, language, limit, name, offset, project, save_job, search, trait |

POST DATA (imports)

Importing data from files through the web interface requires specifying the POST parameters of the ODB API.

| Endpoint | Description | Parameters |

|---|---|---|

| bibreferences | Bibliographic references (GET lists, POST creates). | bibtex, doi |

| biocollections | Biocollections (GET lists, POST creates). | acronym, name |

| individuals | Individuals (GET lists, POST creates, PUT updates). | altitude, angle, biocollection, biocollection_number, biocollection_type, collector, dataset, date, distance, identification_based_on_biocollection, identification_based_on_biocollection_number, identification_date, identification_individual, identification_notes, identifier, latitude, location, location_date_time, location_notes, longitude, modifier, notes, tag, taxon, x, y |

| individual-locations | Occurrences for individuals with multiple locations (GET lists, POST/PUT upserts). | altitude, angle, distance, individual, latitude, location, location_date_time, location_notes, longitude, x, y |

| locations | Locations (GET lists, POST creates, PUT updates). | adm_level, altitude, azimuth, datum, geojson, geom, ismarine, lat, long, name, notes, parent, startx, starty, x, y |

| locations-validation | Validates coordinates against registered locations (POST). | latitude, longitude |

| measurements | Trait measurements (GET lists, POST creates/imports via ImportMeasurements job, PUT bulk updates). | bibreference, dataset, date, duplicated, link_id, location, notes, object_id, object_type, parent_measurement, person, trait_id, value |

| media | Media metadata (GET lists, POST creates, PUT updates). | collector, dataset, date, filename, latitude, license, location, longitude, notes, object_id, object_type, project, tags, title_en, title_pt |

| persons | People (GET lists, POST creates, PUT updates). | abbreviation, biocollection, email, full_name, institution |

| taxons | Taxonomic names (GET lists, POST creates). | author, author_id, bibreference, gbif, ipni, level, mobot, mycobank, name, parent, person, valid, zoobank |

| traits | Trait definitions (GET lists, POST creates). | bibreference, categories, description, export_name, link_type, name, objects, parent, range_max, range_min, tags, type, unit, value_length, wavenumber_max, wavenumber_min |

| vernaculars | Vernacular names (GET lists, POST creates). | citations, individuals, language, name, notes, parent, taxons, type |

| vouchers | Voucher specimens (GET lists, POST creates, PUT updates). | biocollection, biocollection_number, biocollection_type, collector, dataset, date, individual, notes, number |

| datasets | Datasets and published dataset files (GET lists, POST creates via import job). | description, license, name, privacy, project_id, title |

PUT DATA (updates)

Only the endpoints listed below can be updated using the API and only the listed PUT fields can be updated on each endpoint.

Field values are as explained for the POST API endpoints, except that in all cases you must also provide the id of the record to be updated.

| Endpoint | Description | Parameters |

|---|---|---|

| individuals | Individuals (GET lists, POST creates, PUT updates). | id, collector, dataset, date, identification_based_on_biocollection, identification_based_on_biocollection_number, identification_date, identification_individual, identification_notes, identifier, individual_id, modifier, notes, tag, taxon |

| individual-locations | Occurrences for individuals with multiple locations (GET lists, POST/PUT upserts). | id, altitude, angle, distance, individual, individual_location_id, latitude, location, location_date_time, location_notes, longitude, x, y |

| locations | Locations (GET lists, POST creates, PUT updates). | id, adm_level, altitude, datum, geom, ismarine, lat, location_id, long, name, notes, parent, startx, starty, x, y |

| measurements | Trait measurements (GET lists, POST creates/imports via ImportMeasurements job, PUT bulk updates). | id, bibreference, dataset, date, duplicated, link_id, location, measurement_id, notes, object_id, object_type, parent_measurement, person, trait_id, value |

| media | Media metadata (GET lists, POST creates, PUT updates). | id, collector, dataset, date, latitude, license, location, longitude, media_id, media_uuid, notes, project, tags, title_en, title_pt |

| persons | People (GET lists, POST creates, PUT updates). | id, abbreviation, biocollection, email, full_name, institution, person_id |

| vouchers | Voucher specimens (GET lists, POST creates, PUT updates). | id, biocollection, biocollection_number, biocollection_type, collector, dataset, date, individual, notes, number, voucher_id |

Nomenclature types

| Nomenclature types numeric codes | |

|---|---|

| NotType : 0 | Isosyntype : 8 |

| Type : 1 | Neotype : 9 |

| Holotype : 2 | Epitype : 10 |

| Isotype : 3 | Isoepitype : 11 |

| Paratype : 4 | Cultivartype : 12 |

| Lectotype : 5 | Clonotype : 13 |

| Isolectotype : 6 | Topotype : 14 |

| Syntype : 7 | Phototype : 15 |
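Client code that works with these numeric codes can keep them in one place. The sketch below mirrors the table as a Python enumeration; the class name `NomenclatureType` and the helper `nomenclature_code` are hypothetical and not part of any OpenDataBio client.

```python
from enum import IntEnum


class NomenclatureType(IntEnum):
    """Numeric codes copied from the nomenclature types table."""
    NOT_TYPE = 0
    TYPE = 1
    HOLOTYPE = 2
    ISOTYPE = 3
    PARATYPE = 4
    LECTOTYPE = 5
    ISOLECTOTYPE = 6
    SYNTYPE = 7
    ISOSYNTYPE = 8
    NEOTYPE = 9
    EPITYPE = 10
    ISOEPITYPE = 11
    CULTIVARTYPE = 12
    CLONOTYPE = 13
    TOPOTYPE = 14
    PHOTOTYPE = 15


def nomenclature_code(label):
    """Look up the code for a label such as 'Holotype' or 'NotType'."""
    wanted = label.replace("_", "").replace(" ", "").upper()
    for member in NomenclatureType:
        if member.name.replace("_", "") == wanted:
            return int(member)
    raise ValueError(f"unknown nomenclature type: {label!r}")


print(nomenclature_code("Holotype"), nomenclature_code("NotType"))
```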

Taxonomic ranks

| Code | Rank |

|---|---|

| -100 | clade |

| 0 | kingdom |

| 10 | subkingd. |

| 30 | div., phyl., phylum, division |

| 40 | subdiv. |

| 60 | cl., class |

| 70 | subcl., subclass |

| 80 | superord., superorder |

| 90 | ord., order |

| 100 | subord. |

| 120 | fam., family |

| 130 | subfam., subfamily |

| 150 | tr., tribe |

| 180 | gen., genus |

| 190 | subg., subgenus, sect. |

| 210 | section, sp., spec., species |

| 220 | subsp., subspecies |

| 240 | var., variety |

| 270 | f., fo., form |

3.2 - GET data

- The OpenDataBio-R package is a client for this API.

- Query examples in R are here;

- No authentication is needed to access data with a public access policy

- Authentication token is required only to get data with a non-public access policy

Shared GET parameters

When multiple parameters are specified, they are combined with an AND operator. There is no OR parameter option in searches.

The limit and offset parameters can be used to divide your search into parts. Alternatively, use the save_job=T option and then download the data with the get_file=T parameter from the userjobs API.

Some parameters accept an asterisk as wildcard, so api/v0/taxons?name=Euterpe will return taxons with name exactly as “Euterpe”, while api/v0/taxons?name=Eut* will return names starting with “Eut”.

| Parameter | Required | Description | Example |

|---|---|---|---|

| id | No | Single id or comma-separated list to filter or target records. | 1,2,3 |

| limit | No | Maximum number of records to return. | 100 |

| offset | No | The starting position of the record set to be exported. Used together with limit to limit results. | 10000 |

| fields | No | Comma separated list of the fields to include in the response or special words all/simple/raw, default to simple | id,scientificName or all |

| save_job | No | If 1, save the results as file to download later via userjobs + get_file = T | 1 |
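The shared parameters combine into a single query string, with list values written as comma-separated text. A minimal sketch follows; `build_query` is a hypothetical helper, and leaving commas and asterisks unescaped is a readability choice that assumes the server accepts them literally, as in the examples above.

```python
from urllib.parse import urlencode


def build_query(**filters):
    """Encode filters; lists become comma-separated values, None is skipped."""
    params = {}
    for name, value in filters.items():
        if value is None:
            continue
        if isinstance(value, (list, tuple)):
            value = ",".join(str(item) for item in value)
        params[name] = value
    # Keep "," and "*" readable, matching the URL examples in this section.
    return urlencode(params, safe=",*")


print(build_query(name="Eut*", fields=["id", "scientificName"], limit=100))
```

Because every parameter is joined with "&", all filters apply together, which matches the AND behaviour described above.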

GET endpoints

Quick links

- /

- bibreferences

- biocollections

- datasets

- individuals

- individual-locations

- languages

- locations

- measurements

- media

- persons

- projects

- taxons

- traits

- vernaculars

- vouchers

- userjobs

- activities

- tags

/ (GET)

Tests your access/token.

No parameters for this endpoint.

bibreferences (GET)

Bibliographic references (GET lists, POST creates).

| Parameter | Required | Description | Example |

|---|---|---|---|

| id | No | Single id or comma-separated list to filter or target records. | 1,2,3 |

| bibkey | No | Bibreference key or list of keys. | ducke1953,mayr1992 |

| biocollection | No | Biocollection id/name/acronym; returns references cited by vouchers in those collections. | INPA |

| dataset | No | Dataset id or name; returns bibreferences linked to the dataset. | Forest1 |

| fields | No | Comma separated list of the fields to include in the response or special words all/simple/raw, default to simple | id,scientificName or all |

| job_id | No | Job id to reuse affected ids or filter results from a job. | 1024 |

| limit | No | Maximum number of records to return. | 100 |

| offset | No | The starting position of the record set to be exported. Used together with limit to limit results. | 10000 |

| save_job | No | If 1, save the results as file to download later via userjobs + get_file = T | 1 |

| search | No | Full-text search on bibtex using boolean mode; spaces act as AND. | Amazon forest |

| taxon | No | Taxon id or canonical name list; matches references linked to the taxon. | Ocotea guianensis or 120,455 |

| taxon_root | No | Taxon id/name including descendants. | Lauraceae |

Fields returned

Fields (simple): id, bibkey, year, author, title, doi, url, bibtex

Fields (all): id, bibkey, year, author, title, doi, url, bibtex

Response example

{

"meta": {

"odb_version": "0.10.0-alpha1",

"api_version": "v0",

"server": "http://localhost/opendatabio"

},

"data": [

{

"id": 2,

"bibkey": "Riberiroetal1999FloraDucke",

"year": 1999,

"author": "José Eduardo Lahoz Da Silva Ribeiro and Michael John Gilbert Hopkins and Alberto Vicentini and Cynthia Anne Sothers and Maria Auxiliadora Da Silva Costa and Joneide Mouzinho De Brito and Maria Anália Duarte De Souza and Lúcia Helena Pinheiro Martins and Lúcia Garcez Lohmann and Paulo Apóstolo Costa Lima Assunção and Everaldo Da Costa Pereira and Cosme Fernandes Da Silva and Mariana Rabello Mesquita and Lilian Costa Procópio",

"title": "Flora Da Reserva Ducke: Guia De Identificação Das Plantas Vasculares De Uma Floresta De Terra Firme Na Amazônica Central",

"doi": null,

"url": null,

"bibtex": "@Article{Riberiroetal1999FloraDucke,\r\n title = {Flora da Reserva Ducke: Guia de Identifica{\\c{c}}{\\~a}o das Plantas Vasculares de uma Floresta de Terra Firme na Amaz{\\^o}nica Central},\r\n author = {José Eduardo Lahoz da Silva Ribeiro and Michael John Gilbert Hopkins and Alberto Vicentini and Cynthia Anne Sothers and Maria Auxiliadora da Silva Costa and Joneide Mouzinho de Brito and Maria Anália Duarte de Souza and Lúcia Helena Pinheiro Martins and Lúcia Garcez Lohmann and Paulo Apóstolo Costa Lima Assunç{ã}o and Everaldo da Costa Pereira and Cosme Fernandes da Silva and Mariana Rabello Mesquita and Lilian Costa Procópio},\r\n journal = {Flora da Reserva Ducke: Guia de Identifica{\\c{c}}{\\~a}o das Plantas Vasculares de uma Floresta de Terra Firme na Amaz{\\^o}nica Central},\r\n year = {1999},\r\n publisher = {INPA-DFID Manaus},\r\n pages = {819p},\r\n}"

},

{

"id": 3,

"bibkey": "Sutter2006female",

"year": 2006,

"author": "D. Merino Sutter and P. I. Forster and P. K. Endress",

"title": "Female Flowers And Systematic Position Of Picrodendraceae (Euphorbiaceae S.l., Malpighiales)",

"doi": "10.1007/s00606-006-0414-0",

"url": "http://dx.doi.org/10.1007/s00606-006-0414-0",

"bibtex": "@article{Sutter2006female,\n author = {D. Merino Sutter and P. I. Forster and P. K. Endress},\n year = {2006},\n title = {Female flowers and systematic position of Picrodendraceae (Euphorbiaceae s.l., Malpighiales)},\n issn = {0378-2697 | 1615-6110},\n issue = {1-4},\n url = {http://dx.doi.org/10.1007/s00606-006-0414-0},\n doi = {10.1007/s00606-006-0414-0},\n volume = {261},\n page = {187-215},\n journal = {Plant Systematics and Evolution},\n journal_short = {Plant Syst. Evol.},\n published = {Springer Science and Business Media LLC}\n}"

}

]

}

biocollections (GET)

Biocollections (GET lists, POST creates).

| Parameter | Required | Description | Example |

|---|---|---|---|

| id | No | Single id or comma-separated list to filter or target records. | 1,2,3 |

| acronym | No | Biocollection acronym. | INPA |

| fields | No | Comma separated list of the fields to include in the response or special words all/simple/raw, default to simple | id,scientificName or all |

| irn | No | Index Herbariorum IRN for filtering biocollections. | 123456 |

| job_id | No | Job id to reuse affected ids or filter results from a job. | 1024 |

| limit | No | Maximum number of records to return. | 100 |

| name | No | Exact biocollection name (string). | Instituto Nacional de Pesquisas da Amazônia |

| offset | No | The starting position of the record set to be exported. Used together with limit to limit results. | 10000 |

| save_job | No | If 1, save the results as file to download later via userjobs + get_file = T | 1 |

| search | No | Full-text search parameter. | Silva |

Fields returned

Fields (simple): id, acronym, name, irn

Fields (all): id, acronym, name, irn, country, city, address

Response example

{

"meta": {

"odb_version": "0.10.0-alpha1",

"api_version": "v0",

"server": "http://localhost/opendatabio"

},

"data": [

{

"id": 1,

"acronym": "INPA",

"name": "Instituto Nacional de Pesquisas da Amazônia",

"irn": 124921,

"country": null,

"city": null,

"address": null

},

{

"id": 2,

"acronym": "SPB",

"name": "Universidade de São Paulo",

"irn": 126324,

"country": null,

"city": null,

"address": null

}

]

}

datasets (GET)

Datasets and published dataset files (GET lists, POST creates via import job).

| Parameter | Required | Description | Example |

|---|---|---|---|

| id | No | Single id or comma-separated list to filter or target records. | 1,2,3 |

| bibreference | No | Bibreference id or bibkey. | 34 or ducke1953 |

| fields | No | Comma separated list of the fields to include in the response or special words all/simple/raw, default to simple | id,scientificName or all |

| file_name | No | Dataset version file name to download. | 2_Organisms.csv |

| has_versions | No | When 1, returns only datasets that have public versions. | 1 |

| include_url | No | When 1 with list_versions, include file download URL. | 1 |

| limit | No | Maximum number of records to return. | 100 |

| list_versions | No | If true, lists dataset version files for given id(s). | 1 |

| name | No | Translatable trait name. Accepts a plain string or a JSON map of language codes to names. | {"en":"Height","pt-br":"Altura"} |

| offset | No | The starting position of the record set to be exported. Used together with limit to limit results. | 10000 |

| project | No | Project id or acronym. | PDBFF or 2 |

| save_job | No | If 1, save the results as file to download later via userjobs + get_file = T | 1 |

| search | No | Full-text search parameter. | Silva |

| summarize | No | Dataset id to return content/taxonomic/trait summaries. | 3 |

| tag | No | Individual tag/number/code. | A-1234 |

| tagged_with | No | Tag ids (comma) or text to filter datasets by tags (supports id list or full-text). | 12,13 or canopy leaf |

| taxon | No | Taxon id or canonical full name list. | Licaria cannela or 456,789 |

| taxon_root | No | Taxon id/name including descendants. | Lauraceae |

| traits | No | Trait ids list (comma-separated) for filtering datasets. | 12,15 |

Fields returned

Fields (simple): id, name, title, projectName, description, notes, contactEmail, taggedWidth, uuid

Fields (all): id, name, title, projectName, notes, privacyLevel, policy, description, measurements_count, contactEmail, taggedWidth, uuid

Response example

{

"meta": {

"odb_version": "0.10.0-alpha1",

"api_version": "v0",

"server": "http://localhost/opendatabio"

},

"data": [

{

"id": 4,

"name": "PDBFF-FITO 1ha core plots 1-10cm dbh - TREELETS",

"title": "Arvoretas (1cm>DAP",

"projectName": "Projeto Dinâmica Biológica de Fragmentos Florestais (PDBFF-Data)",

"notes": null,

"privacyLevel": "Restrito a usuários autorizados",

"policy": null,

"description": "Contém o único censo de árvores de pequeno porte 1-10cm de diâmetro nas parcelas de 1ha do PDBFF, em 11 das 69 de parcelas permanentes de 1ha do Programa de Monitoramento de Plantas do PDBFF.",

"measurements_count": null,

"contactEmail": "example",

"taggedWidth": "Parcelas florestais | PDBFF | Fitodemográfico",

"uuid": "e1d8ce8d-4847-11f0-8e9f-9cb654b86224"

}

]

}

individuals (GET)

Individuals (GET lists, POST creates, PUT updates).

| Parameter | Required | Description | Example |
|---|---|---|---|
| id | No | Single id or comma-separated list to filter or target records. | 1,2,3 |
| dataset | No | Dataset id/name; filters records that belong to the given dataset. | 3 or FOREST1 |
| date_max | No | Inclusive end date (YYYY-MM-DD), compared against the individual's date. | 2024-12-31 |
| date_min | No | Inclusive start date (YYYY-MM-DD), compared against the individual's date. | 2020-01-01 |
| fields | No | Comma-separated list of fields to include in the response, or one of the special words all/simple/raw; defaults to simple. | id,scientificName or all |
| job_id | No | Job id to reuse affected ids or filter results from a job. | 1024 |
| limit | No | Maximum number of records to return. | 100 |
| location | No | Location id/name list; matches individuals at those exact locations. | Parcela 25ha or 55,60 |
| location_root | No | Location id/name; includes descendants of the given locations. | Parcela 25ha (also returns its subplots) |
| odbrequest_id | No | Request id to filter individuals linked to that ODB request. | 12 |
| offset | No | Starting position of the record set to be exported; used together with limit to restrict results. | 10000 |
| person | No | Collector person id/name/email list; filters by main or associated collectors. | Silva, J.B. or 23,10 |
| project | No | Project id/name; matches records whose dataset belongs to the project. | PDBFF |
| save_job | No | If 1, saves the results as a file to download later via userjobs + get_file = T. | 1 |
| tag | No | Individual tag/number filter; supports a comma-separated list. | A-123,2001 |
| taxon | No | Taxon id/name list; matches the identification taxon only (no descendants). | Licaria guianensis,Minquartia guianensis or 456,457 |
| taxon_root | No | Taxon id/name list; includes descendants of each taxon. | Lauraceae,Fabaceae or 10,20 |
| trait | No | Trait id list; only used together with dataset to filter by measurements. | 12,15 |
| vernacular | No | Vernacular id/name list to match linked vernaculars. | castanha\|12 |
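To illustrate how the filters above combine into a single request, here is a minimal Python sketch that assembles a GET URL for the individuals endpoint. The base URL (in particular the `/api/` path segment) and the helper name `build_individuals_url` are assumptions made for illustration; only the parameter names come from the table. Authentication is not covered here.

```python
from urllib.parse import urlencode

# Assumed base URL: server and api_version are taken from the "meta" block of
# the response examples; the "/api/" path segment is an assumption.
BASE_URL = "http://localhost/opendatabio/api/v0"


def build_individuals_url(base_url=BASE_URL, **filters):
    """Return a GET URL for the individuals endpoint (hypothetical helper).

    List or tuple values are joined with commas, because the parameters in
    the table accept comma-separated lists. Filters set to None are skipped.
    """
    params = {}
    for name, value in filters.items():
        if value is None:
            continue
        if isinstance(value, (list, tuple)):
            value = ",".join(str(item) for item in value)
        params[name] = value
    query = urlencode(params)
    url = f"{base_url}/individuals"
    return f"{url}?{query}" if query else url


url = build_individuals_url(
    taxon_root=["Lauraceae", "Fabaceae"],
    project="PDBFF",
    date_min="2020-01-01",
    fields="simple",
    limit=100,
)
print(url)
```

Note that `urlencode` percent-encodes the commas inside list values (as `%2C`); a server that decodes query strings in the usual way receives them as plain commas.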

Fields returned

Fields (simple): id, basisOfRecord, organismID, recordedByMain, recordNumber, recordedDate, family, scientificName, identificationQualifier, identifiedBy, dateIdentified, locationName, locationParentName, decimalLatitude, decimalLongitude, x, y, gx, gy, angle, distance, datasetName

Fields (all): id, basisOfRecord, organismID, recordedByMain, recordNumber, recordedDate, recordedBy, scientificName, scientificNameAuthorship, taxonPublishedStatus, genus, family, identificationQualifier, identifiedBy, dateIdentified, identificationRemarks, identificationBiocollection, identificationBiocollectionReference, locationName, higherGeography, decimalLatitude, decimalLongitude, georeferenceRemarks, locationParentName, x, y, gx, gy, angle, distance, organismRemarks, datasetName, uuid

Response example

{

"meta": {

"odb_version": "0.10.0-alpha1",

"api_version": "v0",

"server": "http://localhost/opendatabio"

},

"data": [

{

"id": 306246,

"basisOfRecord": "Organism",

"organismID": "2639_Spruce_1852",

"recordedByMain": "Spruce, R.",

"recordNumber": "2639",

"recordedDate": "1852-10",

"recordedBy": "Spruce, R.",

"scientificName": "Ecclinusa lanceolata",

"scientificNameAuthorship": "(Mart. & Eichler) Pierre",

"taxonPublishedStatus": "published",

"genus": "Ecclinusa",

"family": "Sapotaceae",

"identificationQualifier": "",

"identifiedBy": "Spruce, R.",

"dateIdentified": "1852-10-00",

"identificationRemarks": "",

"identificationBiocollection": null,

"identificationBiocollectionReference": null,

"locationName": "São Gabriel da Cachoeira",

"higherGeography": "São Gabriel da Cachoeira < Amazonas < Brasil",

"decimalLatitude": 1.1841927,

"decimalLongitude": -66.80167715,

"georeferenceRemarks": "decimal coordinates are the CENTROID of the footprintWKT geometry",

"locationParentName": "Amazonas",

"x": null,

"y": null,

"gx": null,

"gy": null,

"angle": null,

"distance": null,

"organismRemarks": "prope Panure ad Rio Vaupes Amazonas, Brazil",

"datasetName": "Exsicatas LABOTAM",

"uuid": "c01000f0-f437-11ef-b90b-9cb654b86224"

}

]

}

individual-locations (GET)

Occurrences for individuals with multiple locations (GET lists, POST/PUT upserts).

| Parameter | Required | Description | Example |
|---|---|---|---|
| id | No | Single id or comma-separated list to filter or target records. | 1,2,3 |
| dataset | No | Dataset id/name; filters by the dataset of the linked individual. | FOREST1 |
| date_max | No | Upper-bound date/time; compared against date_time, or against the individual's date when date_time is empty. | 2024-12-31 |
| date_min | No | Lower-bound date/time; compared against date_time, or against the individual's date when date_time is empty. | 2020-01-01 |
| fields | No | Comma-separated list of fields to include in the response, or one of the special words all/simple/raw; defaults to simple. | id,scientificName or all |
| individual | No | Individual id list whose occurrences will be returned. | 12,44 |
| limit | No | Maximum number of records to return. | 100 |
| location | No | Location id or name. | Parcela 25ha or 55 |
| location_root | No | Location id/name, with descendants included. | Amazonas or 10 |
| offset | No | Starting position of the record set to be exported; used together with limit to restrict results. | 10000 |
| person | No | Collector person id/name/email list; filters by the individual's collectors. | J.Silva\|23 |
| project | No | Project id/name; matches occurrences whose individual belongs to datasets in the project. | PDBFF |
| save_job | No | If 1, saves the results as a file to download later via userjobs + get_file = T. | 1 |
| tag | No | Individual tag/number list; matches against the individuals.tag column. | A-123,B-2 |
| taxon | No | Taxon id or canonical full name list. | Licaria cannella or 456,789 |
| taxon_root | No | Taxon id/name, including descendants. | Lauraceae |
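Because `limit` and `offset` work together, a large result set can be retrieved page by page. The sketch below shows one way to do that in Python. It assumes that `offset` is zero-based and that a page shorter than `limit` means no more records remain; neither behaviour is stated in the table. The `fetch_page` callable is a hypothetical stand-in for the real HTTP request, replaced here by an in-memory list so the example runs without a server.

```python
def fetch_all(fetch_page, limit=100):
    """Collect every record by requesting consecutive pages.

    fetch_page(limit=..., offset=...) must return a list of records.
    Assumption: a page with fewer than `limit` records is the last one.
    """
    records = []
    offset = 0
    while True:
        page = fetch_page(limit=limit, offset=offset)
        records.extend(page)
        if len(page) < limit:
            break
        offset += limit
    return records


# Stand-in for the API: 250 made-up occurrence records held in memory.
FAKE_OCCURRENCES = [{"id": i} for i in range(1, 251)]


def fake_fetch_page(limit, offset):
    """Return one slice of the fake data, mimicking limit/offset paging."""
    return FAKE_OCCURRENCES[offset:offset + limit]


all_records = fetch_all(fake_fetch_page, limit=100)
print(len(all_records))  # 250
```

For very large exports, the `save_job` parameter described in the table may be a better fit than paging, since it saves the results as a file for later download.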

Fields returned

Fields (simple): id, individual_id, basisOfRecord, occurrenceID, organismID, recordedDate, locationName, higherGeography, decimalLatitude, decimalLongitude, x, y, angle, distance, minimumElevation, occurrenceRemarks, scientificName, family, datasetName

Fields (all): id, individual_id, basisOfRecord, occurrenceID, organismID, scientificName, family, recordedDate, locationName, higherGeography, decimalLatitude, decimalLongitude, georeferenceRemarks, x, y, angle, distance, minimumElevation, occurrenceRemarks, organismRemarks, datasetName
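Each occurrence carries the `individual_id` of the individual it belongs to, so the occurrences of one individual can be gathered after download. The following Python sketch groups decoded records by that field. The helper name `group_by_individual` is hypothetical, and the second sample record is made up for illustration; the first uses ids from the response example in this section.

```python
from collections import defaultdict


def group_by_individual(occurrences):
    """Map each individual_id to the list of its occurrence records."""
    grouped = defaultdict(list)
    for occurrence in occurrences:
        grouped[occurrence["individual_id"]].append(occurrence)
    return dict(grouped)


# Records shaped like the "data" array of an individual-locations response.
occurrences = [
    {"id": 306244, "individual_id": 306246, "recordedDate": "1852-10"},
    # Hypothetical second occurrence of the same individual.
    {"id": 999001, "individual_id": 306246, "recordedDate": "1853-02"},
    # Hypothetical occurrence of another individual.
    {"id": 999002, "individual_id": 12, "recordedDate": "2021-09-29"},
]

grouped = group_by_individual(occurrences)
for individual_id, records in grouped.items():
    print(individual_id, [record["id"] for record in records])
```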

Response example

{

"meta": {

"odb_version": "0.10.0-alpha1",

"api_version": "v0",

"server": "http://localhost/opendatabio"

},

"data": [

{

"id": 306244,

"individual_id": 306246,

"basisOfRecord": "Occurrence",

"occurrenceID": "2639_Spruce_1852.1852-10",

"organismID": "2639_Spruce_1852",

"scientificName": "Ecclinusa lanceolata",

"family": "Sapotaceae",

"recordedDate": "1852-10",

"locationName": "São Gabriel da Cachoeira",

"higherGeography": "Brasil > Amazonas > São Gabriel da Cachoeira",

"decimalLatitude": 1.1841927,

"decimalLongitude": -66.80167715,

"georeferenceRemarks": "decimal coordinates are the CENTROID of the footprintWKT geometry",

"x": null,

"y": null,

"angle": null,

"distance": null,

"minimumElevation": null,

"occurrenceRemarks": null,

"organismRemarks": "prope Panure ad Rio Vaupes Amazonas, Brazil",

"datasetName": "Exsicatas LABOTAM"

}

]

}

languages (GET)

Lists available interface/data languages.

| Parameter | Required | Description | Example |
|---|---|---|---|
| fields | No | Comma-separated list of fields to include in the response, or one of the special words all/simple/raw; defaults to simple. | id,scientificName or all |
| limit | No | Maximum number of records to return. | 100 |
| offset | No | Starting position of the record set to be exported; used together with limit to restrict results. | 10000 |

Response example

{

"meta": {

"odb_version": "0.10.0-alpha1",

"api_version": "v0",

"server": "http://localhost/opendatabio"

},

"data": [

{

"id": 1,

"code": "en",

"name": "English",

"is_locale": 1,

"created_at": null,

"updated_at": null

}

]

}

locations (GET)

Locations (GET lists, POST creates, PUT updates).

| Parameter | Required | Description | Example |
|---|---|---|---|
| id | No | Single id or comma-separated list to filter or target records. | 1,2,3 |
| adm_level | No | One or more adm_level codes. | 10,100 |
| dataset | No | Dataset id/name; expands to all locations used by that dataset. | FOREST1 |
| fields | No | Comma-separated list of fields to include in the response, or one of the special words all/simple/raw; defaults to simple. | id,scientificName or all |
| job_id | No | Job id to reuse affected ids or filter results from a job. | 1024 |
| lat | No | Latitude (decimal degrees); used with querytype. | -3.11 |
| limit | No | Maximum number of records to return. | 100 |
| location_root | No | Alias of root, kept for compatibility. | Amazonas |
| long | No | Longitude (decimal degrees); used with querytype. | -60.02 |
| name | No | Exact name match; accepts a list of names or ids. | Manaus or 10 |
| offset | No | Starting position of the record set to be exported; used together with limit to restrict results. | 10000 |
| parent_id | No | Parent id for hierarchical queries. | 210 |
| project | No | Project id or acronym. | PDBFF or 2 |
| querytype | No | Type of geometric search when lat/long are provided: exact, parent, or closest. | parent |
| root | No | Location id/name; returns it, all its descendants, and related locations. | Amazonas or "Parque Nacional do Jaú" ... |
| save_job | No | If 1, saves the results as a file to download later via userjobs + get_file = T. | 1 |
| search | No | Prefix search on name (SQL LIKE name%). | Mana (matches names that start with "mana") |
| taxon | No | Taxon id/name list; filters locations by linked identifications. | Euterpe precatoria |
| taxon_root | No | Taxon id/name list; includes descendants when filtering linked identifications. | Euterpe (finds all records that belong to this genus) |
| trait | No | Trait id/name; only works together with dataset to filter by measurements. | DBH |
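The `lat`, `long` and `querytype` parameters are meant to be used together for coordinate-based searches. The Python sketch below builds such a request URL and rejects unknown query types before any request is sent. The base URL (including the `/api/` path segment) and the helper name `build_location_search_url` are assumptions for illustration; the three query types and the parameter names are the ones listed in the table.

```python
from urllib.parse import urlencode

# Assumed base URL: server and api_version come from the response "meta"
# block; the "/api/" path segment is an assumption.
BASE_URL = "http://localhost/opendatabio/api/v0"

# Query types listed in the parameter table.
QUERY_TYPES = ("exact", "parent", "closest")


def build_location_search_url(lat, lon, querytype, base_url=BASE_URL, **extra):
    """Return a GET URL for a coordinate search on the locations endpoint."""
    if querytype not in QUERY_TYPES:
        raise ValueError(f"querytype must be one of {QUERY_TYPES}")
    if not -90 <= lat <= 90:
        raise ValueError("lat must be between -90 and 90 decimal degrees")
    if not -180 <= lon <= 180:
        raise ValueError("long must be between -180 and 180 decimal degrees")
    # The API parameter is named "long"; "lon" is only the local variable name.
    params = {"lat": lat, "long": lon, "querytype": querytype, **extra}
    return f"{base_url}/locations?{urlencode(params)}"


url = build_location_search_url(
    -3.11, -60.02, "parent", fields="id,locationName,adm_level"
)
print(url)
```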

Fields returned

Fields (simple): id, basisOfRecord, locationName, adm_level, country_adm_level, x, y, startx, starty, distance_to_search, parent_id, parentName, higherGeography, footprintWKT, locationRemarks, decimalLatitude, decimalLongitude, georeferenceRemarks, geodeticDatum

Fields (all): id, basisOfRecord, locationName, adm_level, country_adm_level, x, y, startx, starty, distance_to_search, parent_id, parentName, higherGeography, footprintWKT, locationRemarks, decimalLatitude, decimalLongitude, georeferenceRemarks, geodeticDatum
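The `georeferenceRemarks` field explains how `decimalLatitude` and `decimalLongitude` relate to `footprintWKT`. For the "Parcela 1105" record in the response example of this section, the remark says the decimal coordinates are the start point of the geometry. The Python sketch below checks this with values copied from that example. The parser is a simplified illustration that only handles single-ring `POLYGON` strings, not a general WKT reader, and the helper name is hypothetical.

```python
import re


def wkt_polygon_vertices(wkt):
    """Parse a single-ring POLYGON WKT string into (longitude, latitude) pairs.

    WKT lists coordinates as "x y", that is, longitude first.
    """
    match = re.fullmatch(r"\s*POLYGON\s*\(\((.*?)\)\)\s*", wkt)
    if match is None:
        raise ValueError("expected a single-ring POLYGON WKT string")
    vertices = []
    for pair in match.group(1).split(","):
        lon, lat = pair.split()
        vertices.append((float(lon), float(lat)))
    return vertices


# Values copied from the "Parcela 1105" response example.
record = {
    "footprintWKT": (
        "POLYGON((-59.81371985 -2.42215752,-59.81360263 -2.42126619,"
        "-59.81270751 -2.42136656,-59.81282469 -2.42225788,"
        "-59.81371985 -2.42215752))"
    ),
    "decimalLatitude": -2.42215752,
    "decimalLongitude": -59.81371985,
}

vertices = wkt_polygon_vertices(record["footprintWKT"])
start_lon, start_lat = vertices[0]
print(start_lon == record["decimalLongitude"])  # True
print(start_lat == record["decimalLatitude"])   # True
```

Other records may state a different rule in `georeferenceRemarks` (for example, a centroid), so the remark should be read before the coordinates are interpreted.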

Response example

{

"meta": {

"odb_version": "0.10.0-alpha1",

"api_version": "v0",

"server": "http://localhost/opendatabio"

},

"data": [

{

"id": 27297,

"basisOfRecord": "Location",

"locationName": "Parcela 1105",

"adm_level": 100,

"country_adm_level": "Parcela",

"x": "100.00",

"y": "100.00",

"startx": null,

"starty": null,

"distance_to_search": null,

"parent_id": 27277,

"parentName": "Fazenda Esteio",

"higherGeography": "Brasil > Amazonas > Rio Preto da Eva > Fazenda Esteio > Parcela 1105",

"footprintWKT": "POLYGON((-59.81371985 -2.42215752,-59.81360263 -2.42126619,-59.81270751 -2.42136656,-59.81282469 -2.42225788,-59.81371985 -2.42215752))",

"locationRemarks": "source: Polígono desenhado a partir das coordenadas de GPS dos vértices; georeferencedBy: Diogo Martins Rosa & Ana Andrade; fundedBy: Edital CNPq-Brasil/LBA 458027/2013-8; geometryBy: Alberto Vicentini; geometryDate: 2021-09-29; warning: Conflito com polígono da UC de 2021. Este polígono deveria ter a mesma geometria do polígono correspondente que faz parte da UC ARIE PDBFF, mas como ele foi gerado pelas coordenadas de campo, foi mantida essa geometria. A UC, portanto, não protege adequadamente essa parcela de monitoramento.",

"decimalLatitude": -2.42215752,

"decimalLongitude": -59.81371985,

"georeferenceRemarks": "decimal coordinates are the START POINT in footprintWKT geometry",

"geodeticDatum": null

}

]

}

measurements (GET)